Biolinguistics

Biolinguistics can be defined as the study of the biology and evolution of language. It is highly interdisciplinary, drawing on fields such as biology, linguistics, psychology, anthropology, mathematics, and neurolinguistics to explain the formation of language. It seeks to provide a framework for understanding the fundamentals of the faculty of language. The field was first introduced by Massimo Piattelli-Palmarini, professor of Linguistics and Cognitive Science at the University of Arizona, in 1971, at an international meeting at the Massachusetts Institute of Technology (MIT).

Biolinguistics, also called the biolinguistic enterprise or the biolinguistic approach, is believed to have its origins in Noam Chomsky's and Eric Lenneberg's work on language acquisition, which began in the 1950s as a reaction to the then-dominant behaviorist paradigm. Fundamentally, biolinguistics challenges the view of human language acquisition as a behavior based on stimulus-response interactions and associations.[1] Chomsky and Lenneberg opposed this view, arguing instead for innate knowledge of language. In the 1960s, Chomsky proposed the Language Acquisition Device (LAD), a hypothetical tool for language acquisition with which only humans are born. Similarly, Lenneberg (1967)[2] formulated the Critical Period Hypothesis, whose main idea is that language acquisition is biologically constrained. These works are regarded as pioneering contributions to biolinguistic thought and marked the beginning of a paradigm shift in the study of language.[3]

Origins of biolinguistics

The investigation of the biological foundations of language is associated with two historical periods: the 19th century (primarily via Darwinian evolutionary theory) and the 20th century (primarily via the integration of mathematical linguistics, in the form of Chomskyan generative grammar, with neuroscience).

19th century: Darwin's theory of evolution

Darwinism inspired many researchers to study language, in particular the evolution of language, through the lens of biology. Darwin's theory regarding the origin of language attempts to answer three important questions:[4]

- Did individual languages undergo something like selection as they evolved?

- Did selection play a role in producing the capacity for language in humans?

- If selection did play a role, was selection primarily responsible for the emergence of language, or was it just one of several contributing causes?

The German linguist August Schleicher (born 1821) was the representative pioneer of biolinguistics, discussing the evolution of language on the basis of Darwin's theory of evolution. Because linguistics was regarded as a form of historical science under the influence of the Société de Linguistique de Paris, speculation about the origin of language was not permitted.[5] As a result, hardly any prominent linguist apart from the German linguist Hugo Schuchardt wrote about the origin of language. Darwinism addressed the arguments of other researchers and scholars, such as Max Müller, by arguing that language use, while requiring a certain mental capacity, also stimulates brain development, enabling long trains of thought and strengthening mental power. Darwin drew an extended analogy between the evolution of languages and that of species, noting in each domain the presence of rudiments, of crossing and blending, and of variation, and remarking on how each develops gradually through a process of struggle.[6]

20th century: Biological foundation of language

The first phase in the development of biolinguistics runs through the late 1960s, culminating in the publication of Lenneberg's Biological Foundations of Language (1967). During the first phase, work focused on:

- specifying the boundary conditions for human language as a system of cognition;

- language development, as it presents itself in the acquisition sequence that children go through when they learn a language;

- the genetics of language disorders that create specific language disabilities, including dyslexia and deafness;

- language evolution.

During this period, the greatest progress was made in coming to a better understanding of the defining properties of human language as a system of cognition. Three landmark events shaped the modern field of biolinguistics: two important conferences were convened in the 1970s, and a retrospective article was published in 1997 by Lyle Jenkins.

- 1974: The first official biolinguistic conference was organized by Piattelli-Palmarini, bringing together evolutionary biologists, neuroscientists, linguists, and others interested in the development of language in the individual, its origins and evolution.[7]

- 1976: Another conference was held by the New York Academy of Sciences, after which numerous works on the origin of language were published.[8]

- 1997: For the 40th anniversary of transformational-generative grammar, Lyle Jenkins wrote an article titled "Biolinguistics: Structure development and evolution of language".[9]

The second phase began in the late 1970s. In 1976, Chomsky formulated the fundamental questions of biolinguistics as follows: i) function, ii) structure, iii) physical basis, iv) development in the individual, and v) evolutionary development. In the late 1980s, a great deal of progress was made in answering questions about the development of language, which in turn prompted further questions about language design, function, and the evolution of language. In 1998, the year after Jenkins's article, Juan Uriagereka, a graduate student of Howard Lasnik, wrote Rhyme and Reason, an introductory text to Minimalist Syntax. Their work renewed interest in biolinguistics, prompting many linguists to investigate it together with colleagues in adjacent scientific disciplines.[10] Both Jenkins and Uriagereka stressed the importance of addressing the emergence of the language faculty in humans. At around the same time, geneticists discovered a link between the language deficit manifested by members of the KE family and the gene FOXP2. Although FOXP2 is not the gene responsible for language,[11] this discovery brought many linguists and scientists together to interpret the data, renewing interest in biolinguistics.

Linguists hold differing opinions about the history of biolinguistics. Chomsky believes that its history is simply that of transformational grammar, while Professor Anna Maria Di Sciullo claims that interdisciplinary research in biology and linguistics in the 1950s and 1960s led to the rise of biolinguistics. Jenkins, for his part, believes that biolinguistics was the outcome of transformational grammarians studying human linguistic and biological mechanisms. On the other hand, linguists Martin Nowak and Charles Yang argue that biolinguistics, originating in the 1970s, is distinct from transformational grammar, being instead a new branch of the linguistics-biology research paradigm initiated by transformational grammar.[12]

Developments

Chomsky's Theories

Universal Grammar and Generative Grammar

In Aspects of the Theory of Syntax (1965), Chomsky proposed that languages are the product of a biologically determined capacity present in all humans and located in the brain. He addresses three core questions of biolinguistics: what constitutes knowledge of language, how that knowledge is acquired, and how it is put to use. He argued that a great deal of this knowledge must be innate, supporting his claim with the fact that speakers are capable of producing and understanding novel sentences without explicit instruction. Chomsky proposed that the form of the grammar may emerge from the mental structure afforded by the human brain and argued that formal grammatical categories such as nouns, verbs, and adjectives do not exist. The linguistic theory of generative grammar thereby proposes that sentences are generated by a subconscious set of procedures that are part of an individual's cognitive ability. These procedures are modeled through a set of formal grammatical rules which are thought to generate sentences in a language.[13]
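As a rough illustration of how a finite set of formal rules can generate sentences that are nowhere listed explicitly, the following Python sketch expands a toy phrase-structure grammar. The grammar, its vocabulary, and the `expand` function are hypothetical and chosen only for illustration; they are not Chomsky's rules for English.

```python
from itertools import product

# Hypothetical toy grammar: each nonterminal maps to its alternative right-hand sides.
# Any symbol that is not a key (e.g. "Emma") is treated as a terminal word.
GRAMMAR = {
    "S": [["NP", "VP"]],
    "NP": [["Name"], ["D", "N"]],
    "VP": [["V", "NP"]],
    "Name": [["Emma"], ["Noah"]],
    "D": [["the"]],
    "N": [["pie"], ["cake"]],
    "V": [["dislikes"], ["likes"]],
}


def expand(symbol):
    """Return every word sequence that `symbol` can generate under GRAMMAR."""
    if symbol not in GRAMMAR:
        return [[symbol]]  # a terminal generates only itself
    results = []
    for rhs in GRAMMAR[symbol]:
        # Expand each child, then combine their yields in every possible way.
        for parts in product(*(expand(child) for child in rhs)):
            results.append([word for part in parts for word in part])
    return results


sentences = [" ".join(words) for words in expand("S")]
print(len(sentences))                       # 32
print("Emma dislikes the pie" in sentences)  # True
```

Here eleven rewrite rules yield 32 distinct sentences, including Emma dislikes the pie. Because this toy grammar has no recursive rule, its output is finite; adding a rule that lets a category reappear inside itself would make the set unbounded.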

Chomsky focuses on the mind of the language learner or user and proposes that internal properties of the language faculty are closely linked to the physical biology of humans. He further introduced the idea of a Universal Grammar (UG), theorized to be inherent to all human beings. From the biolinguistic point of view, language acquisition is fast and smooth because humans are naturally endowed with the fundamentals of Universal Grammar, a view opposed to that of the usage-based approach.[14] UG refers to the initial state of the faculty of language: a biologically innate organ that helps the learner make sense of the data and build up an internal grammar.[15] The theory suggests that all human languages are subject to universal principles, together with parameters that allow for different choices (values). It also contends that humans possess a generative grammar that is in some way hard-wired into the human brain and that makes possible the rapid and universal acquisition of speech by young children.[16] Elements of linguistic variation then determine the growth of language in the individual; variation is the result of experience, given the genetic endowment and independent principles reducing complexity. Chomsky's work is often regarded as the weak perspective of biolinguistics because it does not draw on fields of study outside linguistics.[17]

Modularity Hypothesis

According to Chomsky, the human brain consists of various modules, each with its own function, such as the language faculty and visual recognition.[14]

Language Acquisition Device

Language acquisition is a universal feat, and it is believed that we are all born with an innate structure, initially proposed by Chomsky in the 1960s. The Language Acquisition Device (LAD) was presented as an innate structure in humans that enables language learning. Individuals are thought to be "wired" with universal grammar rules that enable them to understand and evaluate complex syntactic structures. Proponents of the LAD often cite the poverty-of-the-stimulus argument, suggesting that children rely on the LAD to develop their knowledge of a language despite not being exposed to a rich linguistic environment. Chomsky later replaced this notion with that of Universal Grammar, providing evidence for a biological basis of language.

Minimalist Program

The Minimalist Program (MP) was introduced by Chomsky in 1993, and it focuses on the parallel between language and the design of natural concepts. Those invested in the Minimalist Program are interested in the physics and mathematics of language and its parallels with the natural world. For example, Piattelli-Palmarini[18] studied the isomorphic relationship between the Minimalist Program and Quantum Field Theory. The Minimalist Program aims to determine how much of the Principles and Parameters model can be taken to follow from a hypothetical optimal and computationally efficient design of the human language faculty. In turn, more developed versions of the Principles and Parameters approach provide technical principles from which the Minimalist Program can be seen to follow.[19] The program further aims to develop ideas involving economy of derivation and economy of representation, which had started to become an independent theory in the early 1990s but were then still considered peripheral to transformational grammar.[20]

Merge

The Merge operation is used by Chomsky to explain the structure of syntax trees within the Minimalist Program. Merge is a process that provides the basis of phrase formation: it takes two elements within a phrase and combines them into one.[21] In The Asymmetry of Merge (2008), A.M. Di Sciullo and D. Isac highlight two key properties of Merge as defined by Chomsky:

- Merge is binary

- Merge is recursive

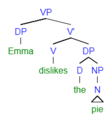

To illustrate, take the sentence Emma dislikes the pie.

This sentence can be broken down into constituents built from its lexical items:

[VP [DP Emma] [V' [V dislikes] [DP [D the] [NP pie]]]]

This bracketed representation shows how each lexical item fits into the structure. To build the tree with Merge, working bottom-up, the final two elements of the phrase are selected and combined to form a new element on the tree. In image a), the determiner the and the noun phrase pie are selected. Through Merge, these form a new element on the tree, the determiner phrase (DP) the pie, visible in b).

- a) Selection of the final two elements of the phrase

- b) The two selected elements are "merged", producing one new constituent, the Determiner Phrase (DP)

- c) Selection of the DP the pie and the V dislikes

- d) Merge has applied, yielding a new element on the tree, V' (V-bar)

- e) Selection of the V' dislikes the pie and the subject DP Emma

- f) Merge has applied, yielding a new element on the tree, VP
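The bottom-up derivation in steps a) to f) can be sketched in Python. In this minimal sketch, `merge` takes exactly two syntactic objects (Merge is binary) and its output can be fed back in as an input (Merge is recursive). The `Leaf` and `Node` classes, and the practice of passing each new label in by hand, are simplifying assumptions of the sketch; in minimalist theory the label is determined by the structure rather than supplied externally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Leaf:
    label: str  # category, e.g. "D"
    word: str   # lexical item, e.g. "the"


@dataclass(frozen=True)
class Node:
    label: str           # label of the new constituent, supplied by hand here
    left: "Leaf | Node"
    right: "Leaf | Node"


def merge(label: str, a: "Leaf | Node", b: "Leaf | Node") -> Node:
    """Binary Merge: combine exactly two syntactic objects into one new constituent."""
    return Node(label, a, b)


def bracket(t: "Leaf | Node") -> str:
    """Render a tree in labelled-bracket notation."""
    if isinstance(t, Leaf):
        return f"[{t.label} {t.word}]"
    return f"[{t.label} {bracket(t.left)} {bracket(t.right)}]"


# Bottom-up derivation of "Emma dislikes the pie"; each output is reused as an input.
dp = merge("DP", Leaf("D", "the"), Leaf("NP", "pie"))  # steps a) and b)
v_bar = merge("V'", Leaf("V", "dislikes"), dp)          # steps c) and d)
vp = merge("VP", Leaf("DP", "Emma"), v_bar)             # steps e) and f)

print(bracket(vp))  # [VP [DP Emma] [V' [V dislikes] [DP [D the] [NP pie]]]]
```

The printed bracketing reproduces the constituent structure given for the sentence, with each Merge step contributing one new node.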

Core components

In a minimalist approach, three core components of the language faculty are proposed: the Sensory-Motor system (SM), the Conceptual-Intentional system (CI), and Narrow Syntax (NS).[22] SM includes the biological requisites for language production and perception, such as the articulatory organs, while CI meets the biological requirements related to inference, interpretation, and reasoning, which are also involved in other cognitive functions. As SM and CI are finite, the main function of NS is to make it possible to produce an infinite number of sound-meaning pairs.
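The idea that finite resources can yield an unbounded number of sound-meaning pairs can be illustrated with a minimal Python sketch, assuming a toy recursive rule that embeds one clause inside another. The function `embed`, its two-name vocabulary, and the predicate-style meaning notation are hypothetical; actual proposals about Narrow Syntax are far richer.

```python
def embed(depth, subjects=("Emma", "Noah")):
    """Return a (sound, meaning) pair built by embedding a clause `depth` times.

    The vocabulary is finite, but every depth yields a new, longer pair.
    """
    if depth == 0:
        return "it rains", "rain"
    subject = subjects[depth % len(subjects)]
    inner_sound, inner_meaning = embed(depth - 1, subjects)
    return (f"{subject} thinks that {inner_sound}",
            f"think({subject}, {inner_meaning})")


print(embed(2))
# ('Emma thinks that Noah thinks that it rains', 'think(Emma, think(Noah, rain))')
```

Each additional level of embedding produces a pair that did not exist at the previous level, so no finite list could contain them all.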

Relevance of Natural Law

It is possible that the core principles of the Faculty of Language are correlated with natural laws, such as the Fibonacci sequence, a sequence of numbers in which each number is the sum of the two that precede it (see, for example, the discussions in Uriagereka 1997 and Carnie and Medeiros 2005).[23] According to the hypothesis being developed, the essential properties of language arise from nature itself: the requirement of efficient growth appears everywhere, from the pattern of petals in flowers, leaf arrangements in trees, and the spirals of a seashell to the structure of DNA and the proportions of the human head and body. Natural Law in this case would provide insight into concepts such as binary branching in syntactic trees as well as the Merge operation. In these terms, Merge takes two elements on a syntax tree, and their sum yields another element on that tree (refer to the trees above in the Minimalist Program section). Because each new element is the sum of exactly two preceding elements, this view supports binary structures. Ternary branching, by contrast, would deviate from the Fibonacci sequence and would therefore offer weaker support for the relevance of Natural Law in syntax.[24]
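The Fibonacci sequence mentioned above can be generated in a few lines. As a purely illustrative contrast with the remark on ternary branching, the hypothetical `k_bonacci` function below sums the three preceding terms when `k=3`; this analogy is an assumption of the sketch, not a claim made by Uriagereka or by Carnie and Medeiros.

```python
def k_bonacci(k, n):
    """Return the first n terms of a sequence that starts with k ones and in which
    every later term is the sum of the k terms before it (k=2 gives Fibonacci)."""
    terms = [1] * min(k, n)
    while len(terms) < n:
        terms.append(sum(terms[-k:]))
    return terms


print(k_bonacci(2, 10))  # [1, 1, 2, 3, 5, 8, 13, 21, 34, 55]
print(k_bonacci(3, 8))   # [1, 1, 1, 3, 5, 9, 17, 31]  (hypothetical "ternary" contrast)

fib = k_bonacci(2, 40)
print(fib[-1] / fib[-2])  # ratio of successive terms, close to the golden ratio (about 1.618)
```

Only the `k=2` case reproduces the Fibonacci numbers; any other branching factor produces a different sequence, which is the intuition behind the claim that ternary branching would deviate from it.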

Biolinguistics: Challenging the Usage-Based Approach

As mentioned above, biolinguistics challenges the idea that the acquisition of language is the result of behavior-based learning. The alternative that biolinguistics challenges is known as the usage-based (UB) approach. UB holds that knowledge of human language is acquired via exposure and usage.[25] One of the primary objections raised against the usage-based approach is that it fails to address the poverty of the stimulus,[26] whereas biolinguistics addresses this by way of the Language Acquisition Device.[27]

Lenneberg and the Role of Genes

Another major contributor to the field is Eric Lenneberg. In his book Biological Foundations of Language,[2] Lenneberg (1967) suggests that aspects of human biology beyond genes putatively contribute to language. This integration of other fields to explain language is recognized as the strong view in biolinguistics.[28] While genes are obviously essential, and while genomes are associated with specific organisms, genes do not store traits (or "faculties") in the way that linguists, including Chomskyans, sometimes seem to imply.

Contrary to the concept of a language faculty as suggested by Chomsky, Lenneberg argues that while there are specific regions and networks crucially involved in the production of language, there is no single region to which language capacity is confined, and that speech, as well as language, is not confined to the cerebral cortex. Lenneberg considered language a species-specific mental organ with significant biological properties. He suggested that this organ grows in the mind/brain of a child in the same way that other biological organs grow, showing that the child's path to language displays the hallmarks of biological growth. According to Lenneberg, genetic mechanisms play an important role in the development of an individual's behavior, and his view of them is characterized by two aspects:

- the acknowledgement of an indirect relationship between genes and traits; and

- the rejection of the existence of 'special' genes for language, that is, the rejection of the need for a specifically linguistic genotype.

Based on this, Lenneberg further claims that no kind of functional principle could be stored in an individual's genes, rejecting the idea that there exist genes for specific traits, including language; in other words, he rejects the idea that genes can contain traits. He then proposed that genes influence the general patterns of structure and function by acting upon ontogenesis, rather than as causal agents individually and directly responsible for a specific phenotype, criticizing a prior hypothesis by Charles Goodwin.[29]

Recent Developments

Generative Procedure Accepted At Present & Its Developments

In biolinguistics, language is recognised to be based on a recursive generative procedure that retrieves words from the lexicon and applies them repeatedly to output phrases.[30][31] This generative procedure has been hypothesised to result from a minor brain mutation, on the basis of evidence that word order is limited to externalisation and plays no role in core syntax or semantics. Different lines of inquiry have therefore been explored to explain it.

The most commonly accepted line of inquiry is Noam Chomsky's minimalist approach to syntactic representations. In their 2016 book Why Only Us, Chomsky and Berwick defined the minimalist program under the Strong Minimalist Thesis, stating that language is mandated by efficient computation and thus keeps to the simplest recursive operations.[11] The basic operation in the minimalist program is Merge. There are two ways in which Merge can construct larger expressions: externally and internally. External Merge combines lexical items into argument representations with disjoint constituents. Internal Merge creates constituent structures in which one constituent is part of another. This induces displacement, the capacity to pronounce phrases in one position but interpret them elsewhere.
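The difference between external and internal Merge can be sketched in Python using the set-theoretic formulation Merge(X, Y) = {X, Y}. In this minimal sketch, lexical items are plain strings, and the example question built from Emma, dislikes, and what is hypothetical: it ignores tense, auxiliaries, and the question of which occurrence is pronounced. The helper names (`terms`, `is_term`, `occurrences`) are assumptions introduced for illustration.

```python
def merge(x, y):
    """Merge(X, Y) = {X, Y}: an unordered set, so word order is left to externalisation."""
    return frozenset([x, y])


def terms(so):
    """Yield a syntactic object and, recursively, every object it contains."""
    yield so
    if isinstance(so, frozenset):
        for member in so:
            yield from terms(member)


def is_term(y, x):
    return any(t == y for t in terms(x))


def external_merge(x, y):
    """Merge two disjoint objects: neither is contained in the other."""
    if is_term(y, x) or is_term(x, y):
        raise ValueError("not external Merge: one object is a term of the other")
    return merge(x, y)


def internal_merge(x, y):
    """Re-merge Y, already a term of X, with X, giving Y a second occurrence."""
    if not is_term(y, x):
        raise ValueError("not internal Merge: y is not a term of x")
    return merge(x, y)


def occurrences(y, x):
    return sum(1 for t in terms(x) if t == y)


vp = external_merge("dislikes", "what")    # {dislikes, what}
clause = external_merge("Emma", vp)        # {Emma, {dislikes, what}}
question = internal_merge(clause, "what")  # {what, {Emma, {dislikes, what}}}
print(occurrences("what", question))       # 2
```

After internal Merge, what occurs twice: once in its original object position, where it is interpreted, and once at the edge of the structure, where it can be pronounced. This is the displacement described above.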

Recent investigations of displacement point to a slight rewiring in cortical brain regions that could have occurred historically and perpetuated generative grammar. Following this line of thought, in 2009 Ramus and Fisher speculated that a single gene could create a signalling molecule to facilitate new brain connections, or a new area of the brain altogether, via prenatally defined brain regions. This would result in information processing of great importance to language as we know it. The spread of this advantageous trait could be responsible for secondary externalisation and the interaction we engage in.[11] If this holds, then the objective of biolinguistics is to find out as much as possible about the principles underlying mental recursion.

Human versus Animal Communication

In other areas of linguistics, data can be supported with cross-linguistic evidence. Because biolinguistics applies to the entirety of linguistics rather than to a specific subfield, examining other species can also help to provide data. Although animals do not have the same linguistic competencies as humans, it is assumed that they can provide evidence for some linguistic competence.

The relatively new science of evo-devo (evolutionary developmental biology), which holds that all organisms descend from a single common tree, has opened pathways into gene and biochemical study. One way this has manifested within biolinguistics is the suggestion of a common language gene, namely FOXP2. Though this gene is the subject of debate, there have been interesting recent discoveries concerning it and the part it plays in the secondary externalization process. Recent studies of birds and mice have produced an emerging consensus that FOXP2 is not a blueprint for internal syntax or for the narrow faculty of language, but rather forms part of the regulatory machinery of externalization. It has been found to assist in sequencing sounds or gestures one after another, which suggests that FOXP2 helps transfer knowledge from declarative to procedural memory. FOXP2 thus appears to aid in building a smoothly running linguistic input-output system.[11]

The Integration Hypothesis

According to the Integration Hypothesis, human language is the combination of the Expressive (E) component and the Lexical (L) component. At the level of words, the L component contains the concept and meaning that we want to convey, while the E component contains grammatical information and inflection. For phrases, we often see an alternation between the two components. In sentences, the E component is responsible for providing the shape and structure to the base-level lexical words, while these lexical items and their corresponding meanings found in the lexicon make up the L component.[32] This has consequences for our understanding of: (i) the origins of the E and L components found in bird and monkey communication systems; (ii) the rapid emergence of human language as related to words; (iii) evidence of hierarchical structure within compound words; (iv) the role of phrases in the detection of the structure-building operation Merge; and (v) the application of E and L components to sentences. In this way, the Integration Hypothesis can be applied to all levels of language: the word, phrase, and sentence levels.

The Origins of the E and L systems in Bird and Monkey Communication Systems

Through the application of the Integration Hypothesis, it can be seen that the interaction between the E and L components enables language structure (E component) and lexical items (L component) to operate simultaneously within one form of complex communication: human language. However, these two components are thought to have emerged from two pre-existing, separate communication systems in the animal world.[32] The communication systems of birds[33] and monkeys[34] have been proposed as antecedents of human language. The bird song communication system is made up entirely of the E component, while the alarm call system used by monkeys is made up of the L component. Because of parallels between human communication and these two animal communication systems, human language is thought to be the byproduct of these two separate systems.

The communication system of songbirds is commonly described as a system based on syntactic operations. Specifically, bird song enables the systematic combination of sound elements in order to string together a song. Likewise, human languages operate syntactically through the systematic combination of words. While bird song relies on syntax, the notes, syllables, and motifs that are combined to produce the different songs may not necessarily carry any meaning.[35] The communication system of songbirds also lacks a lexicon[36] containing any set of meaning-to-referent pairs. Essentially, this means that an individual sound produced by a songbird does not have a meaning associated with it, the way a word does in human language. Bird song can be structured, but it cannot carry meaning. In this way, the prominence of syntax and the absence of lexical meaning present bird song as a strong candidate for a simplified antecedent of the E component found in human language, as this component also lacks lexical information. While birds that use bird song can rely on this E component alone to communicate, human utterances require lexical meaning in addition to the structural operations that are part of the E component, as human language cannot operate with syntactic structure or structural function words alone. This is evident from the fact that human communication does include a lexicon, and humans produce meaningful combined sequences of words, best known as sentences. This suggests that part of human language must have been adapted from another animal's communication system in order for the L component to arise.

A well-known study by Seyfarth et al.[34] investigated the referential nature of the alarm calls of vervet monkeys. These monkeys have three fixed alarm calls, each of which maps directly onto one of the following referents: a leopard, an eagle, or a snake. Each call is used to warn other monkeys about the presence of one of these three predators in their immediate surroundings. The main idea is that the alarm call contains lexical information that can be used to represent the referent. Essentially, the entire communication system used by these monkeys is made up of the L system, such that only these lexical calls are needed to communicate effectively. This is similar to the L component in human language, in which content words, containing the relevant lexical information, are used to refer to referents in the real world. The L component in human language is, however, a much more complex variant of the L component found in vervet monkey communication systems: humans use many more than three word-forms to communicate. While vervet monkeys are capable of communicating solely with the L component, humans are not, as communication with content words alone does not yield well-formed grammatical sentences. It is for this reason that the L component is combined with the E component, which is responsible for syntactic structure, in order to output human language.

The Rapid Emergence of Human Language

As traces of the E and L components have been found in nature, the Integration Hypothesis asserts that these two systems existed before human language, and that it was the combination of these two pre-existing systems that rapidly led to the emergence of human language.[32] The Integration Hypothesis posits that it was the grammatical operator Merge that triggered the combination of the E and L systems to create human language.[37] In this view, language emerged rapidly and fully formed, already containing syntactic structure. This is in contrast to the Gradualist Approach, in which early forms of language are thought not to have had syntax. Instead, supporters of the Gradualist Approach believe that language progressed slowly through a series of stages as a result of a simple combinatory operator that generated flat structures: beginning with a one-word stage, then a two-word stage, then a three-word stage, and so on, language is thought to have developed hierarchy only in later stages.[37]

In the article "The precedence of syntax in the rapid emergence of human language in evolution as defined by the integration hypothesis",[37] Nóbrega and Miyagawa outline the Integration Hypothesis as it applies to words. To explain it at this level, one must first agree on the definition of a 'word'. While this seems fairly straightforward in English, it is not the case for other languages. To allow for cross-linguistic discussion, the idea of a "root" is used instead, where a "root" encapsulates a concept at the most basic level. "Roots" differ from "words" in that roots are completely devoid of any information relating to grammatical category or inflection. Therefore, "roots" form the lexical component of the Integration Hypothesis, while grammatical category (noun, verb, adjective) and inflectional properties (e.g. case, number, tense) form the expressive component.

Thus, at the most basic level for the formation of a "word" in human language, there must be a combination of the L component with the E component. When we know a "word" in a language, we must know both components: the concept that it relates to as well as its grammatical category and inflection. The former is the L component; the latter is the E component. The Integration Hypothesis suggests that it was the grammatical operator Merge that triggered this combination, occurring when one linguistic object (L layer) satisfies the grammatical feature of another linguistic object (E layer). This means that L components are not expected to directly combine with each other.
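The condition just described, in which an L object satisfies a grammatical requirement of an E object while two L objects do not combine directly, can be sketched as a simple type check. The class names `Root`, `Functional`, and `Word`, the feature encoding, and the example roots CAT and DOG are hypothetical illustrations, not Nóbrega and Miyagawa's formalism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Root:
    """L component: a bare concept with no category or inflection."""
    concept: str


@dataclass(frozen=True)
class Functional:
    """E component: a grammatical category plus inflectional features."""
    category: str         # e.g. "n" for noun, "v" for verb
    features: tuple = ()  # e.g. ("plural",)


@dataclass(frozen=True)
class Word:
    """Result of integration: an E layer whose requirement is met by an L layer."""
    e: Functional
    l: Root


def integrate(e, l):
    """Combine one E object with one L object; any other pairing is rejected."""
    if not (isinstance(e, Functional) and isinstance(l, Root)):
        raise TypeError("integration needs exactly one E object and one L object")
    return Word(e, l)


cats = integrate(Functional("n", ("plural",)), Root("CAT"))
print(cats.e.category, cats.e.features, cats.l.concept)  # n ('plural',) CAT

try:
    integrate(Root("CAT"), Root("DOG"))  # two L objects: not expected to combine
except TypeError as err:
    print("rejected:", err)
```

The design choice mirrors the hypothesis: a bare root only becomes a usable word once it is paired with grammatical category and inflection, and the sketch refuses root-root combinations outright.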

On this analysis, human language is believed to have emerged in a single step. Before this rapid emergence, the L component, "roots", existed individually, lacked grammatical features, and did not combine with each other. However, once the L component was combined with the E component, human language emerged with all the necessary characteristics. Hierarchical structures of syntax are already present within words because of the integration of these two layers. This pattern continues when words are combined to make phrases and when phrases are combined into sentences. Therefore, the Integration Hypothesis posits that once these two systems were integrated, human language appeared fully formed and did not require additional stages.

Evidence of Hierarchical Structure Within Compound Words

Compound words are of special interest to the Integration Hypothesis, as they provide further evidence that words contain internal structure. The Integration Hypothesis analyzes compound words differently from previous gradualist theories of language development. As previously mentioned, in the Gradualist Approach, compound words are thought of as part of a proto-syntax stage of human language. In this proposal of a lexical protolanguage, compounds develop in the second stage through the combination of single words by a rudimentary recursive n-ary operation that generates flat structures.[38] However, the Integration Hypothesis challenges this belief, claiming that there is evidence that words are internally complex. In English, for example, the word 'unlockable' is ambiguous because of two possible structures within it. It can mean either something that can be unlocked (unlock-able) or something that is not lockable (un-lockable). This ambiguity points to two possible hierarchical structures within the word: it cannot have the flat structure posited by the Gradualist Approach. With this evidence, supporters of the Integration Hypothesis argue that these hierarchical structures in words are formed by Merge, which combines the L and E components. Thus, Merge is responsible for the formation of compound words and phrases. This leads to the hypothesis that words, compounds, and all linguistic objects of human language are derived from this integration system, and provides evidence against the existence of a protolanguage.[37]
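The 'unlockable' argument can be made concrete by enumerating every binary-branching structure over the morphemes un, lock, and able. In this sketch the helper names `bracketings` and `show` are hypothetical, and morphemes are plain strings; the point is only that three morphemes admit exactly two binary structures, one per reading, whereas a flat structure would offer a single undifferentiated string.

```python
def bracketings(morphemes):
    """Return every binary-branching structure over a sequence of morphemes,
    as nested 2-tuples, preserving the left-to-right order of the morphemes."""
    if len(morphemes) == 1:
        return [morphemes[0]]
    results = []
    for split in range(1, len(morphemes)):
        for left in bracketings(morphemes[:split]):
            for right in bracketings(morphemes[split:]):
                results.append((left, right))
    return results


def show(tree):
    """Render a nested tuple as a bracketed string."""
    return tree if isinstance(tree, str) else f"[{show(tree[0])} {show(tree[1])}]"


for tree in bracketings(("un", "lock", "able")):
    print(show(tree))
# [un [lock able]]   -> "not lockable"
# [[un lock] able]   -> "able to be unlocked"
```

Each structure corresponds to one of the two meanings, so the ambiguity follows from hierarchy rather than from the linear string, which is identical in both cases.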

In the view of compounds as "living fossils", Jackendoff[39] alleges that the basic structure of compounds does not provide enough information to offer semantic interpretation. Hence, the semantic interpretation must come from pragmatics. However, Nórega and Miyagawa[37] noticed that this claim of dependency on pragmatics is not a property of compound words that is demonstrated in all languages. The example provided by Nórega and Miyagawa is the comparison between English (a Germanic language) and Brazilian Portuguese (a Romance language). English compound nouns can offer a variety of semantic interpretations. For example, the compound noun "car man" can have several possible understandings such as: a man who sells cars, a man who's passionate about cars, a man who repairs cars, a man who drives cars, etc. In comparison, the Brazilian Portuguese compound noun "peixe-espada" translated as "sword fish", only has one understanding of a fish that resembles a sword.[37] Consequently, when looking at the semantic interpretations available of compound words between Germanic languages and Romance languages, the Romance languages have highly restrictive meanings. This finding presents evidence that in fact, compounds contain more sophisticated internal structures than previously thought. Moreover, Nórega and Miyagawa provide further evidence to counteract the claim of a protolanguage through examining exocentric VN compounds. As defined, one of the key components to Merge is the property of being recursive. Therefore, by observing recursion within exocentric VN compounds of Romance languages, this proves that there must be an existence of an internal hierarchical structure which Merge is responsible for combining. In the data collected by Nórega and Miyagawa,[37] they observe recursion occurring in several occasions within different languages. 
This happens in Catalan, Italian, and Brazilian Portuguese, where a new VN compound is created when a nominal exocentric VN compound is the complement of a verb. For example, in the Catalan equivalent of "windshield wipers", [neteja[para-brises]] (lit. clean-stop-breeze), there is recursion because the compound [para-brises] is the complement of [neteja]. Recursion also occurs when the noun of a VN compound consists of a list of complements. For example, in the Italian equivalent of "rings, earrings, or small jewels holder", [porta[anelli, orecchini o piccoli monili]] (lit. carry-rings-earrings-or-small-jewels), the string of complements [anelli, orecchini o piccoli monili] fills the noun position of the verb [porta], which again indicates recursion.
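The recursion in the Catalan example can be sketched as a nested data structure. This minimal illustration assumes that a VN compound can be modeled as a verb plus a complement, where the complement is either a bare noun or another VN compound. The class `VN` and the functions `depth` and `brackets` are hypothetical, and the bracket notation is simplified (hyphens dropped).

```python
from dataclasses import dataclass
from typing import Union

@dataclass(frozen=True)
class VN:
    """Hypothetical model of an exocentric verb-noun (VN) compound."""
    verb: str
    complement: Union[str, "VN"]  # a bare noun, or another VN compound

def depth(x: Union[str, VN]) -> int:
    """Count VN layers; a value above 1 means one VN compound is embedded in another."""
    if isinstance(x, str):
        return 0
    return 1 + depth(x.complement)

def brackets(x: Union[str, VN]) -> str:
    """Render a compound in simplified bracket notation."""
    if isinstance(x, str):
        return x
    return f"[{x.verb} {brackets(x.complement)}]"

para_brises = VN("para", "brises")              # lit. 'stop-breeze'
neteja_para_brises = VN("neteja", para_brises)  # lit. 'clean-stop-breeze'

print(brackets(para_brises), depth(para_brises))                # [para brises] 1
print(brackets(neteja_para_brises), depth(neteja_para_brises))  # [neteja [para brises]] 2
```

A depth greater than 1 reflects the embedding of one VN compound inside another. On the analysis described above, that embedding is what requires a recursive, hierarchy-building operation.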

The common claim that compounds are fossils of language is often accompanied by the argument that they have a flat, linear structure.[40] However, Di Sciullo provided experimental evidence against this.[40] Building on the asymmetry in the internal structure of exocentric compounds, she used experimental results to show that hierarchical complexity effects arise in the processing of NV compounds in English. In her experiment, English speakers were presented with sentences containing object-verb compounds and sentences containing adjunct-verb compounds, and they assessed the acceptability of these sentences. Di Sciullo noted that previous work had found adjunct-verb compounds to have a more complex structure than object-verb compounds, because adjunct-verb compounds require Merge to apply several times.[40] In her experiment, 10 English-speaking participants evaluated 60 English sentences. The adjunct-verb compounds had a lower acceptability rate than the object-verb compounds. In other words, sentences containing adjunct-verb compounds were judged more "ill-formed" than sentences containing object-verb compounds. The findings indicate that speakers are sensitive to the internal structure of these compounds. Since adjunct-verb compounds have complex hierarchical structures produced by the recursive application of Merge, they are harder to process than object-verb compounds, which have simpler hierarchical structures. Di Sciullo takes this as evidence that compounds could not have been fossils of a protolanguage without syntax, given their complex internal hierarchical structures.

Interactions Between E and L Components in Phrases of Human Language

As previously mentioned, human language is notable because it necessarily requires elements from both the E and L systems; neither can stand alone. Lexical items, which the Integration Hypothesis refers to as 'roots', are necessary because they refer to things in the world around us. Expression items, which convey information about category or inflection (number, tense, case, etc.), are also required to shape the meanings of the roots.

That neither of these two systems can exist alone in human language becomes clearer when we look at the phenomenon of 'labeling'. Labeling is how the grammatical category of a phrase is determined: the phrase takes its category from one of the words within it, called the head. For example, in the phrase "buy the books", the verb "buy" is the head, so the entire phrase is a verb phrase. Within this verb phrase there is a smaller phrase, "the books", which is a determiner phrase because its head is the determiner "the". Labeling is significant because it allows for hierarchical structure within phrases. This has implications for how words combine to form phrases and, eventually, sentences.[41]
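Labeling can be illustrated with a small sketch in which each phrase takes its label from the category of its head. The classes `Word` and `Phrase` are hypothetical. Treating "books" as a bare word rather than as a full noun phrase is a simplification made to keep the example short.

```python
from dataclasses import dataclass
from typing import Union

@dataclass(frozen=True)
class Word:
    form: str
    category: str  # e.g. "V" (verb), "D" (determiner), "N" (noun)

@dataclass(frozen=True)
class Phrase:
    head: Word
    complement: Union[Word, "Phrase"]

    @property
    def label(self) -> str:
        # The phrase is labeled by projecting its head's category: V -> VP, D -> DP.
        return self.head.category + "P"

def show(x: Union[Word, Phrase]) -> str:
    """Render a structure with labeled brackets."""
    if isinstance(x, Word):
        return x.form
    return f"[{x.label} {x.head.form} {show(x.complement)}]"

the_books = Phrase(Word("the", "D"), Word("books", "N"))
buy_the_books = Phrase(Word("buy", "V"), the_books)

print(show(buy_the_books))  # [VP buy [DP the books]]
```

The nested brackets show the hierarchy: a DP sits inside a VP, and each label comes from the head rather than from word order.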

This labeling phenomenon has limitations, however. Some labels can combine and others cannot. In particular, two lexical labels cannot combine directly. The two nouns "Lucy" and "dress" cannot be combined directly. Likewise, the noun "pencil" cannot be merged with the adjective "short", nor can the verbs "want" and "drink" be merged without anything in between. As represented by the accompanying schematic, all of these examples are impossible lexical structures. This shows that lexical categories are limited to a single layer. These limitations can be overcome, however, by inserting an expression layer in between. For example, to combine "John" and "book", adding a determiner such as "-'s" makes the combination possible ("John's book").[41]

Another limitation concerns the recursive nature of the expressive layer. While CP and TP can combine to form a hierarchical structure, this CP-TP structure cannot repeat on top of itself: it is only a single layer deep. This restriction holds both for the expressive layer in human language and for birdsong. The similarity strengthens the link between the E layers found in human language and the pre-existing E system posited to have originated in birdsong.[41]
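The two stacking restrictions can be approximated by a single check over a sequence of layer types. This is a loose, hypothetical encoding. It assumes that each example reduces to the order in which its L and E layers are stacked, and that a CP-TP complex counts as one E layer. It is not a formalization taken from Miyagawa et al.

```python
def well_formed(layers: list[str]) -> bool:
    """Return True if no two layers of the same type are stacked directly.

    `layers` lists layer types in the order they are stacked: "L" is a lexical
    layer and "E" an expression layer (a CP-TP complex counts as one E layer).
    This single rule is a simplified encoding of both restrictions described
    in the text: no L directly on L, and no E directly on E.
    """
    if any(layer not in ("L", "E") for layer in layers):
        raise ValueError("each layer must be 'L' or 'E'")
    return all(upper != lower for upper, lower in zip(layers, layers[1:]))

# Illustrative encodings of the examples in the text (hypothetical simplifications).
examples = {
    "Lucy + dress": ["L", "L"],
    "short + pencil": ["L", "L"],
    "want + drink": ["L", "L"],
    "John + 's + book": ["L", "E", "L"],
    "CP-TP on CP-TP": ["E", "E"],
}

for name, layers in examples.items():
    print(f"{name}: {well_formed(layers)}")
```

Under this encoding, only the structure in which an E layer intervenes between the two L layers passes.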

Given these limitations, under which both lexical and expressive categories can only be one layer deep, the recursive and unbounded hierarchical structure of human language is surprising. The Integration Hypothesis posits that the combination of these two types of layers is what produces such a rich hierarchical structure: the alternation between L layers and E layers allows human language to reach an arbitrary depth. For example, in the phrase "Eat the cake that Mary baked", the tree structure shows an alternation between L and E layers. This alternation can be described by two phrase rules: (i) LP → L EP and (ii) EP → E LP. The recursion these rules permit becomes clear when they are written in bracket notation. The LP in (i) can be written as [L EP]. Adding an E layer to this LP to create an EP gives [E [L EP]]. A more complex LP can then be obtained by adding an L layer to the EP, giving [L [E [L EP]]]. This process can continue indefinitely, producing the deeply embedded structures found in human language.[41]
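The two phrase rules can be applied mechanically to reproduce the bracket expansion described above. The following is a minimal rewriting sketch. It assumes the rules are exactly (i) LP → L EP and (ii) EP → E LP, and it leaves the final nonterminal unexpanded, as in the text. `RULES` and `expand` are hypothetical names.

```python
# Rule (i): LP -> L EP ; rule (ii): EP -> E LP
RULES = {"LP": ("L", "EP"), "EP": ("E", "LP")}

def expand(symbol: str, steps: int) -> str:
    """Rewrite `symbol` with RULES `steps` times, leaving the last nonterminal unexpanded."""
    if steps == 0 or symbol not in RULES:
        return symbol
    head, rest = RULES[symbol]
    return f"[{head} {expand(rest, steps - 1)}]"

print(expand("LP", 1))  # [L EP]
print(expand("EP", 2))  # [E [L EP]]
print(expand("LP", 3))  # [L [E [L EP]]]
```

Because each rule reintroduces the other's left-hand side, no step count ends the expansion on its own. Depth is bounded only by how many steps are taken, which is the sense in which the alternation yields unbounded hierarchy.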

The Operation of E and L Components in the Syntax of Sentences

The E and L components can be used to explain the syntactic structures that make up sentences in human languages. The first component, the L component, contains content words.[41] This component carries the lexical information that conveys the underlying meaning of a sentence. However, combinations consisting solely of L component content words do not form grammatical sentences. This problem is resolved through the interaction of the L component with the E component. The E component is made up of function words. These words supply syntactic information about the categories of L component words, as well as morphosyntactic information about clause typing, questions, number, case, and focus.[37] Since these elements complement the content words of the L component, the E component can be thought of as being applied to the L component. Because the L component consists solely of lexical information and the E component solely of syntactic information, they exist as two independent systems. However, for a system as complex as human language to arise, the two systems must rely on each other. This aligns with Chomsky's proposal of a duality of semantics, which holds that human language is composed of these two distinct components.[42] This explains why the convergence of the two components was necessary for human language to function as it does today.

The following example is taken from the article "The integration hypothesis of human language evolution and the nature of contemporary languages" by Miyagawa et al.[32] It shows how each word in a sentence can be identified as belonging to either the L component or the E component: Did John eat pizza?

The L component words of this sentence are the content words John, eat, and pizza. Each of them contains only lexical information that contributes directly to the meaning of the sentence. The L component is often called the base or inner component because of its inward position in a phrase structure tree. The string 'John eat pizza' is not a well-formed English sentence, which suggests that E component words are needed to shape and structure it syntactically. The E component is typically called the outer component, since its elements originate in positions surrounding the L component in a phrase structure tree and shape it. In this example, the E component function word is did. Inserting this word adds two types of structure to the expression: tense and clause typing. Did is used to ask about something that happened in the past, so it adds past tense to the expression. Here this does not change the form of the verb: in this environment the verb still surfaces as eat, without any additional tense marking. Instead, the tense slot can be thought of as being filled by a null symbol (∅), since this past tense form has no phonological content. Although covert, this null tense marker is an important contribution of the E component word did. Besides tense, clause typing is also conveyed through the E component. The function word did appears in sentence-initial position, which in English indicates that the string of words is a question. Did thus determines that the clause type of this sentence is interrogative, specifically a yes–no question.
Overall, integrating the E component with the L component produces the well-formed sentence Did John eat pizza?. According to the Integration Hypothesis, the same integration accounts for all other utterances found in human languages.
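The division of labor in Did John eat pizza? can be written down as a small annotated structure. This hypothetical sketch simply records the analysis given in the text. The `Token` class and the feature names `tense` and `clause_type` are illustrative assumptions, not an established annotation scheme.

```python
from dataclasses import dataclass, field

@dataclass
class Token:
    form: str
    component: str  # "L" (content word) or "E" (function word)
    features: dict[str, str] = field(default_factory=dict)

# Hypothetical annotation following the analysis in the text.
sentence = [
    Token("Did", "E", {"tense": "past", "clause_type": "yes-no question"}),
    Token("John", "L"),
    Token("eat", "L"),  # past tense is realized as a null form (∅), so the verb is unchanged
    Token("pizza", "L"),
]

# The L component alone: content words only, not a well-formed sentence.
l_string = " ".join(t.form for t in sentence if t.component == "L")
# What the E component contributes: tense and clause type.
e_features = {k: v for t in sentence if t.component == "E" for k, v in t.features.items()}

print(l_string)    # John eat pizza
print(e_features)  # {'tense': 'past', 'clause_type': 'yes-no question'}
```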

Critiques

Alternative Theoretical Approaches

Stemming from the usage-based approach, the Competition Model, developed by Elizabeth Bates and Brian MacWhinney, views language acquisition as consisting of a series of competitive cognitive processes that act upon a linguistic signal. This suggests that language development depends on learning and detecting linguistic cues with the use of competing general cognitive mechanisms rather than innate, language-specific mechanisms.

From the perspective of biosemiotics, it has recently been claimed that meaning-making begins long before the emergence of human language. This meaning-making consists of internal and external cognitive processes. On this view, such an organization of processes could not have given rise to language alone. All living things possess these processes, however widely they vary, rather than the processes being species-specific.[43]

Over-Emphasised Weak Stream Focus

Two senses of the term biolinguistics are in use: strong and weak biolinguistics. Weak biolinguistics is founded on theoretical linguistics of a generativist persuasion. Strong biolinguistics, on the other hand, goes beyond commonly explored theoretical linguistics and is oriented towards biology and other relevant fields of study. From the early emergence of biolinguistics to the present day, the focus has been mainly on the weak stream. This stream sees little difference between inquiry into generative linguistics and inquiry into the biological nature of language, and it relies heavily on the Chomskyan origin of the term.[44]

As expressed by research professor and linguist Cedric Boeckx, a prevalent opinion holds that biolinguistics needs to focus on biology in order to give substance to the linguistic theorizing the field has engaged in. Particular criticisms include a lack of distinction between generative linguistics and biolinguistics, a lack of discoveries about properties of grammar in the context of biology, and a lack of recognition of the importance of broader mechanisms, such as biological non-linguistic properties. After all, labeling the propensity for language as biological is only an advantage if that insight is put to use in research.[44]

David Poeppel, a neuroscientist and linguist, has additionally noted that if neuroscience and linguistics are done wrong, there is a risk of "inter-disciplinary cross-sterilization". He argues that there is a Granularity Mismatch Problem: because linguistics and neuroscience use representations at different levels of granularity, attempts to link them lead to vague metaphors relating brain structures to linguistic components. Poeppel and Embick also introduce the Ontological Incommensurability Problem, according to which the computational processes described in linguistic theory cannot be reduced to neural computational processes.[45]

A recent critique of biolinguistics, and of 'biologism' in the language sciences in general, has been developed by Prakash Mondal. He argues that there are inconsistencies and categorical mismatches in any putative bridging constraints that purport to relate neurobiological structures and processes to the logical structures of language, which have a cognitive-representational character.[46] [47]

Other Relevant Fields

| Topic | Description | Relevance to Biolinguistics |
|---|---|---|
| Neurolinguistics | The study of how language is represented in the brain; closely tied to psycholinguistics, language acquisition, and the localisation of language processing. | The physiological mechanisms by which the brain processes information related to language. |
| Language Acquisition | The way in which humans learn to perceive, produce and comprehend language;[48] guided by the Universal Grammar proposed by Chomsky; children's ability to learn properties of grammar from impoverished linguistic data.[49] | Language growth and maturation in individuals; evolutionary processes that led to the emergence of language; poverty of the stimulus.[50][9] |
| Linguistic Typology | The analysis, comparison, and classification of languages according to their common structural features.[51] | Identifies similarities and differences among the languages of the world; suggests that languages may not be completely random. |
| Syntax | The rules that govern the grammatical organization of words and phrases. | Generative grammar; poverty of the stimulus; structure dependency, whereby a sentence is influenced by its structure and not just by the order of its words.[52] |
| Artificial Grammar Learning | The intersection between cognitive psychology and linguistics. | Humans' cognitive processes and pattern detection in a language-learning context; how humans learn and interpret grammar. |

Some Researchers in Biolinguistics (in alphabetical order by last name)

- Calixto Agüero-Bautista, Université du Québec à Trois-Rivières

- Michael Arbib, University of Southern California

- Antonio Benítez-Burraco, University of Seville

- Derek Bickerton, University of Hawaii

- Cedric Boeckx, Catalan Institution for Research and Advanced Studies (ICREA)

- Andrew Carnie, University of Arizona

- Anna Maria Di Sciullo, University of Quebec at Montreal

- Ray C. Dougherty, New York University (NYU)

- W. Tecumseh Fitch, University of Vienna

- Dieter Hillert, San Diego State University/UC San Diego

- Philip Lieberman, Brown University

- Alec Marantz, NYU/MIT

- Massimo Piattelli-Palmarini, University of Arizona

- David Poeppel, NYU

- Charles Reiss, Concordia University

See also

- Origin of language

- Origin of speech

- Universal Grammar

- Generative Grammar

- Minimalist Program

- Merge

- Biosemiotics

- Evolutionary psychology of language

- Neurolinguistics

[53] [54] [55] [41] [56] [32] [42] [57]

References

- ^ Demirezen, Mehmet (1988). "Behaviorist theory and language learning". Hacettepe üniversitesi Eğitim Fakültesi Öğretim üyesi. https://dergipark.org.tr/tr/download/article-file/88422

- ^ a b Lenneberg, E.H. (1967). Biological foundations of language. New York: Wiley.

- ^ Martins, Pedro Tiago; Boeckx, Cedric (27 August 2016). "What we talk about when we talk about biolinguistics". Linguistics Vanguard. 2 (1). doi:10.1515/lingvan-2016-0007. S2CID 131936047.

- ^ Radick, Gregory (November 2002). "Darwin on Language and Selection". Selection. 3 (1): 7–12. doi:10.1556/Select.3.2002.1.2. S2CID 36616051.

- ^ Trabant, Jürgen (2001). New essays on the origin of language. De Gruyter. ISBN 9783110849080.

- ^ Plotkin, Henry (25 April 1997). Darwin Machines and the Nature of Knowledge. Harvard University Press. ISBN 9780674192812.

- ^ Boeckx, Cedric; Piattelli-Palmarini, Massimo (2005). "Language as a natural object, linguistics as a natural science" (PDF). The Linguistic Review. 22: 447–466. Archived from the original (PDF) on 2010-07-23. Retrieved 2014-09-15.

- ^ "Origins and evolution of language and speech". Journal of Human Evolution. 8 (2): 309–310. February 1979. doi:10.1016/0047-2484(79)90104-0. ISSN 0047-2484.

- ^ a b Jenkins, Jennifer (1997). "Biolinguistics-structure, development and evolution of language". Web Journal of Formal, Computational and Cognitive Linguistics. CiteSeerX 10.1.1.35.1374.

- ^ Di Sciullo, Anna Maria; Boeckx, Cedric (2011). The Biolinguistic Enterprise: New Perspectives on the Evolution and Nature of the Human Language Faculty Volume 1 of Oxford Studies in Biolinguistics. Oxford University Press, 2011. ISBN 9780199553273.

- ^ a b c d Why Only Us. The MIT Press. 2016. doi:10.7551/mitpress/10684.001.0001. ISBN 9780262333351.

- ^ Wu, Jieqiong (15 January 2014). An Overview of Researches on Biolinguistics. Canadian Social Science. pp. 171–176. CiteSeerX 10.1.1.820.7700.

- ^ Freidin, Robert (5 March 2012). Generative Grammar: Theory and its History (1st ed.). Routledge Leading Linguists. ISBN 9780415541336.

- ^ a b Crain, Stephen; Koring, Loes; Thornton, Rosalind (2017-10-01). "Language acquisition from a biolinguistic perspective". Neuroscience & Biobehavioral Reviews. The Biology of Language. 81 (Pt B): 120–149. doi:10.1016/j.neubiorev.2016.09.004. ISSN 0149-7634. PMID 27633153. S2CID 505901.

- ^ Călinescu, Mihaela (1 January 2012). "Chomsky's Biolinguistic Approach to Mind and Language". Linguistic & Philosophical Investigations. 11: 91–96.

- ^ Logan, Robert K (2007). The extended mind: the emergence of language, the human mind, and culture. Toronto: University of Toronto Press. ISBN 9780802093035.

- ^ Pleyer, Michael; Hartmann, Stefan (2019-11-14). "Constructing a Consensus on Language Evolution? Convergences and Differences Between Biolinguistic and Usage-Based Approaches". Frontiers in Psychology. 10: 2537. doi:10.3389/fpsyg.2019.02537. ISSN 1664-1078. PMC 6868443. PMID 31803099.

- ^ Piattelli-Palmarini, M.; Vitiello, G. (2017). "Quantum field theory and the linguistic Minimalist Program: a remarkable isomorphism". Retrieved 2019-04-10.

- ^ Gert Webelhuth. 1995. Government and Binding Theory and the Minimalist Program: Principles and Parameters in Syntactic Theory. Wiley-Blackwell; Uriagereka, Juan. 1998. Rhyme and Reason. An Introduction to Minimalist Syntax. Cambridge, Massachusetts: MIT Press

- ^ For a full description of the checking mechanism see Adger, David. 2003. Core Syntax. A Minimalist Approach. Oxford: Oxford University Press; and also Carnie, Andrew. 2006. Syntax: A Generative Introduction, 2nd Edition. Blackwell Publishers

- ^ Sciullo, Anna Maria Di; Isac, Daniela (2008-09-30). "The Asymmetry of Merge". Biolinguistics. 2 (4): 260–290. doi:10.5964/bioling.8661. ISSN 1450-3417. S2CID 57556158.

- ^ Di Sciullo, Anna Maria; et al. (Winter 2010). "The Biological Nature of Human Language". Biolinguistics. 4. S2CID 141815607.

- ^ Soschen, Alona (2006). "Natural Law: The Dynamics of Syntactic Representations in MP" (PDF). Archived from the original (PDF) on 2007-02-21. Retrieved 2007-02-18.

- ^ Soschen, Alona (2008-03-25). "On the Nature of Syntax". Biolinguistics. 2 (2–3): 196–224. doi:10.5964/bioling.8641. ISSN 1450-3417. S2CID 58891675.

- ^ Ibbotson, Paul (2013). "The Scope of Usage-Based Theory". Frontiers in Psychology. 4: 255. doi:10.3389/fpsyg.2013.00255. ISSN 1664-1078. PMC 3647106. PMID 23658552.

- ^ Patrick, Dewey. "Evolution of Language and Mind" (PDF). Bibliotex. Retrieved February 3, 2024.

- ^ Crain, Stephen (September 2016). "Language Acquisition from a Biolinguistic Perspective". Neuroscience & Biobehavioral Reviews. 81 (Pt B): 120–149. doi:10.1016/j.neubiorev.2016.09.004. Retrieved February 3, 2024.

- ^ Boeckx, Cedric; Grohmann, Kleanthes K. (2007). "The BIOLINGUISTICS Manifesto". Biolinguistics. 1: 001–008. doi:10.5964/bioling.8583. ISSN 1450-3417. S2CID 170315284.

- ^ Boeckx, Cedric; Longa, Victor M. (2011). "Lenneberg's Views on Language Development and Evolution and Their Relevance for Modern Biolinguistics". Biolinguistics. 5 (3): 254–273. doi:10.5964/bioling.8851. S2CID 17101093. Retrieved 10 April 2019.

- ^ Berwick, Robert C.; Chomsky, Noam (March 2016). "Biolinguistics Evolving". Oxford University Press. Retrieved February 25, 2024.

- ^ "Biolinguistics Evolving" (PDF). Michigan State University. Retrieved February 25, 2024.

- ^ a b c d e Miyagawa, Shigeru; Ojima, Shiro; Berwick, Robert C.; Okanoya, Kazuo (June 9, 2014). "The integration hypothesis of human language evolution and the nature of contemporary languages". Frontiers in Psychology. 5: 564. doi:10.3389/fpsyg.2014.00564. PMC 4048833. PMID 24936195.

- ^ Berwick, Robert C.; Okanoya, Kazuo; Beckers, Gabriel J.L.; Bolhuis, Johan J. (2011). "Songs to syntax: the linguistics of birdsong". Trends in Cognitive Sciences. 15 (3): 113–21. doi:10.1016/j.tics.2011.01.002. PMID 21296608. S2CID 17963919. Retrieved 2020-11-30.

- ^ a b Seyfarth, Robert M.; Cheney, Dorothy L.; Marler, Peter (1980). "Monkey Responses to Three Different Alarm Calls: Evidence of Predator Classification and Semantic Communication". Science. 210 (4471): 801–803. Bibcode:1980Sci...210..801S. doi:10.1126/science.7433999. JSTOR 1684570. PMID 7433999. Retrieved 2020-11-30.

- ^ Sedivy, Julie (2020). "2. Origins of Human Language". Language in Mind: An introduction to psycholinguistics (Second ed.). New York: Oxford University Press.

- ^ Wallin, N. L; Merker, B.; Brown, S. (2001). "3. Origins of Music and Speech: Insights from Animals". The Origins of Music. Vol. 18. University of California Press. pp. 513–521. doi:10.1525/mp.2001.18.4.513. JSTOR 10.1525/mp.2001.18.4.513. Retrieved 2020-12-02.

- ^ a b c d e f g h Nóbrega, Vitor A.; Miyagawa, Shigeru (18 March 2015). "The precedence of syntax in the rapid emergence of human language in evolution as defined by the integration hypothesis". Frontiers in Psychology. 6: 271. doi:10.3389/fpsyg.2015.00271. PMC 4364162. PMID 25852595.

- ^ Progovac, Ljiljana (2015-06-01). Evolutionary Syntax. Oxford University Press. doi:10.1093/acprof:oso/9780198736547.001.0001. ISBN 978-0-19-873654-7.

- ^ Jackendoff, Ray (2011-07-07). "Compounding in the Parallel Architecture and Conceptual Semantics". Oxford Handbooks Online. doi:10.1093/oxfordhb/9780199695720.013.0006.

- ^ a b c Di Sciullo, Anna Maria (November 2013). "Exocentric Compounds, Language and Proto-language". Language & Information Society. 20: 1–25. doi:10.29211/soli.2013.20..001. ISSN 1598-1886.

- ^ a b c d e f Miyagawa, Shigeru; Berwick, Robert; Okanoya, Kazuo (2013). "The emergence of hierarchical structure in human language". Frontiers in Psychology. 4: 71. doi:10.3389/fpsyg.2013.00071. PMC 3577014. PMID 23431042.

- ^ a b Chomsky, Noam. "New Horizons in the Study of Language and Mind". Cambridge: Cambridge University Press. Retrieved 2020-11-30.

- ^ Velmezova, Ekaterina; Kull, Kalevi; Cowley, Stephen (eds.) 2015. Biosemiotic Perspectives on Language and Linguistics. (Biosemiotics 13.) Cham: Springer.

- ^ a b Martins, Pedro Tiago; Boeckx, Cedric (2016-12-01). "What we talk about when we talk about biolinguistics". Linguistics Vanguard. -1 (open–issue). doi:10.1515/lingvan-2016-0007. S2CID 131936047.

- ^ Poeppel, David; Embick, David (2005). "Defining the Relation Between Linguistics and Neuroscience". In Anne Cutler (ed.). Twenty-First Century Psycholinguistics: Four Cornerstones. Lawrence Erlbaum.

- ^ Mondal, Prakash (2019). Language, Biology, and Cognition: A Critical Perspective. Berlin/New York: Springer Nature.

- ^ Mondal, Prakash (2022). "A Critical Perspective on the (Neuro)biological Foundations of Language and Linguistic Cognition". Integrative Psychological and Behavioral Science. doi:10.1007/s12124-022-09741-0.

- ^ Lieberman, Philip (1984). The Biology and Evolution of Language. Harvard University Press.

- ^ Sciullo, Anna Maria Di; Jenkins, Lyle (September 2016). "Biolinguistics and the human language faculty". Language. 92 (3): e205–e236. doi:10.1353/lan.2016.0056. S2CID 151533338.

- ^ Hickok, Greg (6 September 2009). "The functional neuroanatomy of language". Physics of Life Reviews. 6 (3): 121–43. Bibcode:2009PhLRv...6..121H. doi:10.1016/j.plrev.2009.06.001. PMC 2747108. PMID 20161054.

- ^ Jenkins, Lyle (2000). Biolinguistics: Exploring the biology of language. New York, NY: Cambridge University Press. pp. 76–108.

- ^ Trudgill, Peter (2004). "The impact of language contact and social structure on linguistic structure: Focus on the dialects of modern Greek". Dialectology Meets Typology: Dialect Grammar from a Cross-linguistic Perspective. 153: 435–452.

- ^ Tallerman, Maggie (August 2017). "Can the integration hypothesis account for language evolution?". Journal of Neurolinguistics. 43: 254–262. doi:10.1016/j.jneuroling.2016.06.006. S2CID 53188478. Retrieved 2020-11-16.

- ^ Miyagawa, S. (2010). Why Agree? Why Move?: Unifying Agreement-Based and Discourse-Configurational Languages. Linguistic Inquiry Monographs. MIT Press. ISBN 9780262013611.

- ^ Chomsky, N. (1995). The Minimalist Program. Cambridge, MA: The MIT Press.

- ^ Hale, Kenneth L.; Keyser, Samuel Jay, eds. (1993). The View from Building 20: Essays in Linguistics in Honor of Sylvain Bromberger. Cambridge, Mass: MIT Press.

- ^ Sedivy, Julie (2020). Language in Mind: An introduction to psycholinguistics (Second ed.). New York: Oxford University Press.

Conferences

- Biolinguistic Investigations Conference, Santo Domingo, Dominican Republic, February 2007.

- Conference on Biolinguistics: Language Evolution and Variation, Università di Venezia, June 2007.

- ICREA International Symposium in Biolinguistics, Universitat de Barcelona, October 2012.

- The Journal of Biolinguistics

External links

- Media related to Biolinguistics at Wikimedia Commons

- Biolinguistics Journal