Mind uploading

Mind uploading is a speculative process of whole brain emulation in which a brain scan is used to completely emulate the mental state of the individual in a digital computer. The computer would then run a simulation of the brain's information processing, such that it would respond in essentially the same way as the original brain and experience having a sentient conscious mind.[1][2][3]

Substantial mainstream research in related areas is being conducted in neuroscience and computer science, including animal brain mapping and simulation,[4] development of faster supercomputers, virtual reality, brain–computer interfaces, connectomics, and information extraction from dynamically functioning brains.[5] According to supporters, many of the tools and ideas needed to achieve mind uploading already exist or are under active development; they acknowledge that others remain very speculative, but maintain that these are still within the realm of engineering possibility.

Mind uploading may be accomplished by either of two methods: copy-and-upload, or copy-and-delete by gradual replacement of neurons (which can be considered a gradual destructive uploading) until the original organic brain no longer exists and a computer program emulating the brain takes control of the body. In the former method, mind uploading would be achieved by scanning and mapping the salient features of a biological brain, and then storing and copying that information state into a computer system or another computational device. In some variants of uploading, the biological brain may not survive the copying process or may be deliberately destroyed during it. The simulated mind could exist within a virtual reality or simulated world, supported by an anatomic 3D body simulation model. Alternatively, the simulated mind could reside in a computer that is inside, connected to, or remotely controlled by a (not necessarily humanoid) robotic, biological, or cybernetic body.[6]

Among some futurists and within part of the transhumanist movement, mind uploading is treated as an important proposed life extension or immortality technology (known as "digital immortality"). Some believe mind uploading is humanity's current best option for preserving the identity of the species, as opposed to cryonics. Other aims of mind uploading are to provide a permanent backup of our "mind-file", to enable interstellar space travel, and to give human culture a means of surviving a global disaster by making a functional copy of a human society in a computing device. Whole-brain emulation is discussed by some futurists as a "logical endpoint"[6] of the topical computational neuroscience and neuroinformatics fields, both of which concern brain simulation for medical research purposes. It is discussed in artificial intelligence research publications as an approach to strong AI (artificial general intelligence) and to at least weak superintelligence. Another approach is seed AI, which would not be based on existing brains. Computer-based intelligence such as an upload could think much faster than a biological human even if it were no more intelligent. A large-scale society of uploads might, according to futurists, give rise to a technological singularity, meaning a sudden time constant decrease in the exponential development of technology.[7] Mind uploading is a central conceptual feature of numerous science fiction novels, films, and games.[8]

Overview

Many neuroscientists believe that the human mind is largely an emergent property of the information processing of its neuronal network.[9]

Neuroscientists have stated that important functions performed by the mind, such as learning, memory, and consciousness, are due to purely physical and electrochemical processes in the brain and are governed by applicable laws. For example, Christof Koch and Giulio Tononi wrote in IEEE Spectrum:

Consciousness is part of the natural world. It depends, we believe, only on mathematics and logic and on the imperfectly known laws of physics, chemistry, and biology; it does not arise from some magical or otherworldly quality.[10]

Eminent computer scientists and neuroscientists have predicted that advanced computers will be capable of thought and even attain consciousness, including Koch and Tononi,[10] Douglas Hofstadter,[11] Jeff Hawkins,[11] Marvin Minsky,[12] Randal A. Koene, and Rodolfo Llinás.[13]

Many theorists have presented models of the brain and have established a range of estimates of the amount of computing power needed for partial and complete simulations.[4][6] Using these models, some have estimated that uploading may become possible within decades if trends such as Moore's law continue.[14] As of December 2022, this kind of technology is almost entirely theoretical.

Theoretical benefits and applications

"Immortality" or backup

In theory, if the information and processes of the mind can be disassociated from the biological body, they are no longer tied to the individual limits and lifespan of that body. Furthermore, information within a brain could be partly or wholly copied or transferred to one or more other substrates (including digital storage or another brain), thereby reducing or eliminating, from a purely mechanistic perspective, the "mortality risk" of such information. This general proposal was discussed in 1971 by biogerontologist George M. Martin of the University of Washington.[15] The proposal calls the concept of identity into question. From the perspective of the biological brain, the simulated brain may just be a copy, even if it is conscious and has an indistinguishable character. As such, the original biological being, before the uploading, might consider the digital twin to be a new and independent being rather than the future self.[16]

Space exploration

An "uploaded astronaut" could be used instead of a "live" astronaut in human spaceflight, avoiding the perils of zero gravity, the vacuum of space, and cosmic radiation to the human body. It would allow for the use of smaller spacecraft, such as the proposed StarChip, and it would enable virtually unlimited interstellar travel distances.[17]

Mind editing

While some researchers believe editing human brains to be physically possible in theory, for example by performing neurosurgery with nanobots, it would require particularly advanced technology. Editing an uploaded mind would be much easier, as long as the exact edits to be made are known.[18] This would facilitate cognitive enhancement and the precise control of the well-being, motivations or personality of the emulated beings.[19]

Speed

Although the number of neuronal connections in the human brain is very large (around 100 trillion[20]), the firing frequency of biological neurons is limited to around 200 Hz, whereas electronic hardware can easily operate at multiple GHz. With sufficient hardware parallelism, a simulated brain could thus in theory be made to run faster than a biological brain. Uploaded beings may therefore not only be more efficient, but also supposedly have a faster rate of subjective experience than biological brains (e.g. experiencing an hour of lifetime in a single second of real time).[21]
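The arithmetic behind these figures can be sketched as follows. This is only an illustration: the 2 GHz clock is an assumed value standing in for "multiple GHz", the function names are hypothetical, and the raw clock ratio is an upper-bound comparison rather than an achievable speedup, since simulating one neuron would take many hardware operations.

```python
SECONDS_PER_HOUR = 3600


def subjective_seconds(real_seconds: float, speedup: float) -> float:
    """Subjective time experienced by an emulation running `speedup` times faster than biology."""
    return real_seconds * speedup


def naive_clock_ratio(hardware_hz: float, neuron_hz: float) -> float:
    """Ratio of a hardware clock rate to a neuronal firing rate (not a real speedup estimate)."""
    return hardware_hz / neuron_hz


# Speedup needed to experience one subjective hour per real second (the example in the text).
required_speedup = SECONDS_PER_HOUR / 1.0

# Assumed 2 GHz clock versus the ~200 Hz neuronal firing limit quoted above.
ratio = naive_clock_ratio(2e9, 200.0)

print(f"Speedup for 1 subjective hour per real second: {required_speedup:.0f}x")
print(f"Naive clock-to-firing-rate ratio: {ratio:.0e}")
```

The gap between the two numbers shows why the claim depends on "sufficient hardware parallelism": the clock ratio leaves headroom only if the per-neuron simulation cost stays well below that ratio.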

Relevant technologies and techniques

The focus of mind uploading, in the case of copy-and-transfer, is on data acquisition, rather than data maintenance of the brain. A set of approaches known as loosely coupled off-loading (LCOL) may be used in the attempt to characterize and copy the mental contents of a brain.[22] The LCOL approach may take advantage of self-reports, life-logs and video recordings that can be analyzed by artificial intelligence. A bottom-up approach may focus on the specific resolution and morphology of neurons and on their spike times, that is, the times at which neurons produce action potential responses.
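To make the notion of spike times concrete, the sketch below extracts them from a sampled membrane-voltage trace by detecting upward threshold crossings. Threshold detection is a common simple technique and is an assumption here, not a method described by the cited sources; the function name, the trace values and the 0 mV threshold are all hypothetical.

```python
from typing import List, Sequence


def detect_spike_times(
    voltages_mv: Sequence[float],
    times_ms: Sequence[float],
    threshold_mv: float,
) -> List[float]:
    """Return the sample times at which the trace crosses the threshold from below."""
    if len(voltages_mv) != len(times_ms):
        raise ValueError("voltages_mv and times_ms must have the same length")
    spikes = []
    for i in range(1, len(voltages_mv)):
        # A spike is counted once, at the first sample at or above the threshold.
        if voltages_mv[i - 1] < threshold_mv <= voltages_mv[i]:
            spikes.append(times_ms[i])
    return spikes


# Hypothetical trace sampled once per millisecond.
trace = [-70.0, -65.0, -20.0, 10.0, -60.0, -70.0, -30.0, 5.0, -70.0]
sample_times = [float(t) for t in range(len(trace))]
print(detect_spike_times(trace, sample_times, threshold_mv=0.0))
```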

Computational complexity

Advocates of mind uploading point to Moore's law to support the notion that the necessary computing power is expected to become available within a few decades. However, the actual computational requirements for running an uploaded human mind are very difficult to quantify, potentially rendering such an argument specious.

Regardless of the techniques used to capture or recreate the function of a human mind, the processing demands are likely to be immense, due to the large number of neurons in the human brain along with the considerable complexity of each neuron.

Required computational capacity strongly depends on the chosen level of simulation model scale:[6]

| Level | CPU demand (FLOPS) | Memory demand (Tb) | $1 million supercomputer (earliest year of making) |
|---|---|---|---|
| Analog network population model | 10^15 | 10^2 | 2008 |
| Spiking neural network | 10^18 | 10^4 | 2019 |
| Electrophysiology | 10^22 | 10^4 | 2033 |
| Metabolome | 10^25 | 10^6 | 2044 |
| Proteome | 10^26 | 10^7 | 2048 |
| States of protein complexes | 10^27 | 10^8 | 2052 |
| Distribution of complexes | 10^30 | 10^9 | 2063 |
| Stochastic behavior of single molecules | 10^43 | 10^14 | 2111 |
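As a rough illustration of how the years in such a table relate to hardware trends, the sketch below computes the doubling time implied by two (FLOPS, year) pairs and extrapolates when a larger requirement might be met. It assumes smooth exponential growth in computing power per dollar, which is a simplification; the function names are hypothetical and are not taken from the cited source.

```python
import math


def implied_doubling_years(flops_a: float, year_a: float, flops_b: float, year_b: float) -> float:
    """Doubling time (years) implied by two (FLOPS, year) points, assuming exponential growth."""
    doublings = math.log2(flops_b / flops_a)
    return (year_b - year_a) / doublings


def projected_year(base_flops: float, base_year: float, target_flops: float, doubling_years: float) -> float:
    """Year at which target_flops would be reached under a constant doubling time."""
    doublings = math.log2(target_flops / base_flops)
    return base_year + doublings * doubling_years


# Two rows from the table: 10^15 FLOPS (2008) and 10^18 FLOPS (2019).
doubling = implied_doubling_years(1e15, 2008, 1e18, 2019)
print(f"Implied doubling time: {doubling:.2f} years")

# Extrapolating the same trend to the electrophysiology level (10^22 FLOPS).
year = projected_year(1e18, 2019, 1e22, doubling)
print(f"Projected year for 1e22 FLOPS: {year:.1f}")
```

Under these assumptions the first two rows imply a doubling time of about 1.1 years, and extrapolating that trend lands within about a year of the table's entry for the electrophysiology level. The projection is only as reliable as the assumption that the trend continues.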

Scanning and mapping scale of an individual

When modelling and simulating the brain of a specific individual, a brain map or connectivity database showing the connections between the neurons must be extracted from an anatomic model of the brain. For whole brain simulation, this network map should show the connectivity of the whole nervous system, including the spinal cord, sensory receptors, and muscle cells. Destructive scanning of a small sample of tissue from a mouse brain including synaptic details is possible as of 2010.[24]

However, if short-term memory and working memory include prolonged or repeated firing of neurons, as well as intra-neural dynamic processes, the electrical and chemical signal state of the synapses and neurons may be hard to extract. The uploaded mind may then perceive a memory loss of the events and mental processes immediately before the time of brain scanning.[6]

A full brain map has been estimated to occupy less than 2 × 10^16 bytes (20,000 TB) and would store the addresses of the connected neurons, the synapse type and the synapse "weight" for each of the brain's 10^15 synapses.[6][failed verification] However, the biological complexities of true brain function (e.g. the epigenetic states of neurons, protein components with multiple functional states, etc.) may preclude an accurate prediction of the volume of binary data required to faithfully represent a functioning human mind.
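The storage estimate can be checked with simple arithmetic. The sketch below uses only the two figures quoted above (the total size and the synapse count) and decimal terabytes; the 20 bytes per synapse is the value those figures imply, not a number stated by the source, and the variable names are hypothetical.

```python
SYNAPSES = 10**15          # synapse count quoted in the text
TOTAL_BYTES = 2 * 10**16   # upper estimate for the full brain map
BYTES_PER_TB = 10**12      # decimal terabyte


def brain_map_bytes(synapses: int, bytes_per_synapse: int) -> int:
    """Total storage for a map that keeps a fixed-size record per synapse."""
    return synapses * bytes_per_synapse


# Record size per synapse implied by the two quoted figures.
bytes_per_synapse = TOTAL_BYTES // SYNAPSES
total_tb = brain_map_bytes(SYNAPSES, bytes_per_synapse) // BYTES_PER_TB

print(f"Implied bytes per synapse: {bytes_per_synapse}")
print(f"Total size: {total_tb:,} TB")
```

Each record would have to hold the target neuron's address, the synapse type and the weight within that budget, which is why richer biochemical state could push the real figure well beyond this estimate.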

Serial sectioning

A possible method for mind uploading is serial sectioning, in which the brain tissue and perhaps other parts of the nervous system are frozen and then scanned and analyzed layer by layer, thus capturing the structure of the neurons and their interconnections; for frozen samples at the nanoscale, this requires a cryo-ultramicrotome.[25][26] The exposed surface of frozen nerve tissue would be scanned and recorded, and then the surface layer of tissue removed. While this would be a very slow and labor-intensive process, research is underway to automate the collection and microscopy of serial sections.[27] The scans would then be analyzed, and a model of the neural net recreated in the system into which the mind was being uploaded.

There are uncertainties with this approach using current microscopy techniques. If it is possible to replicate neuron function from its visible structure alone, then the resolution afforded by a scanning electron microscope would suffice for such a technique.[27] However, as the function of brain tissue is partially determined by molecular events (particularly at synapses, but also at other places on the neuron's cell membrane), this may not suffice for capturing and simulating neuron functions. It may be possible to extend the techniques of serial sectioning and to capture the internal molecular makeup of neurons, through the use of sophisticated immunohistochemistry staining methods that could then be read via confocal laser scanning microscopy. However, as the physiological genesis of 'mind' is not currently known, this method may not be able to access all of the necessary biochemical information to recreate a human brain with sufficient fidelity.

Brain imaging

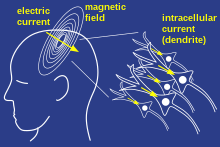

It may be possible to create functional 3D maps of the brain activity, using advanced neuroimaging technology, such as functional MRI (fMRI, for mapping change in blood flow), magnetoencephalography (MEG, for mapping of electrical currents), or combinations of multiple methods, to build a detailed three-dimensional model of the brain using non-invasive and non-destructive methods. Today, fMRI is often combined with MEG for creating functional maps of human cortex during more complex cognitive tasks, as the methods complement each other. Even though current imaging technology lacks the spatial resolution needed to gather the information needed for such a scan, important recent and future developments are predicted to substantially improve both spatial and temporal resolutions of existing technologies.[29]

Brain simulation

Ongoing work in the field of brain simulation includes partial and whole simulations of some animals.[4] For example, the C. elegans roundworm,[30] Drosophila fruit fly,[31] and mouse[32] have all been simulated to various degrees.

The Blue Brain Project, initiated by the Brain and Mind Institute of the École Polytechnique Fédérale de Lausanne in Switzerland, is an attempt to create a synthetic brain by reverse-engineering mammalian brain circuitry, in order to accelerate experimental research on the brain.[33] In 2009, after a successful simulation of part of a rat brain, the director Henry Markram claimed that "A detailed, functional artificial human brain can be built within the next 10 years".[34] In 2013, Markram became the director of the new decade-long Human Brain Project. But less than two years into it, the project was recognized to be mismanaged and its claims overblown, and Markram was asked to step down.[35][36]

Issues

Philosophical issues

The main philosophical problem faced by "mind uploading" or mind copying is the hard problem of consciousness: the difficulty of explaining how a physical entity such as a human can have qualia, phenomenal consciousness, or subjective experience.[37] Many philosophical responses to the hard problem entail that mind uploading is fundamentally or practically impossible, while others are compatible with at least some formulations of mind uploading. Many proponents of mind uploading defend the possibility of mind uploading by recourse to physicalism, which includes the philosophical belief that consciousness is an emergent feature that arises from large neural network high-level patterns of organization, which could be realized in other processing devices. Mind uploading relies on the idea that the human mind (the "self" and the long-term memory) reduces to the current neural network paths and the weights of synapses in the brain. In contrast, many dualistic and idealistic accounts seek to avoid the hard problem of consciousness by explaining it in terms of immaterial (and presumably inaccessible) substances like soul, which would pose a fundamental or at least practical challenge to the feasibility of artificial consciousness in general.[38]

Assuming physicalism is true, the mind can be defined as the information state of the brain, so it is immaterial only in the same sense as the information content of a data file, or the state of software residing in a computer's memory. In this case, data specifying the information state of the neural network could be captured and copied as a "computer file" from the brain and re-implemented into a different physical form.[39] This is not to deny that minds are richly adapted to their substrates.[40] An analogy to mind uploading is to copy the information state of a computer program from the memory of the computer on which it is executing to another computer and then continue its execution on the second computer. The second computer may perhaps have different hardware architecture, but it emulates the hardware of the first computer.
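The computer analogy can be illustrated with a toy program whose state is captured, copied, and resumed elsewhere. This is a minimal sketch of checkpoint-and-restore for a trivial counter, intended only to show what "copying an information state and continuing execution" means for software; the class and method names are hypothetical, and nothing here bears on whether a mind can be treated the same way.

```python
import json


class Counter:
    """A tiny program whose entire state is a single integer."""

    def __init__(self, value: int = 0) -> None:
        self.value = value

    def step(self) -> int:
        """Advance the computation by one step and return the new state."""
        self.value += 1
        return self.value

    def snapshot(self) -> str:
        """Serialize the information state to a portable text form."""
        return json.dumps({"value": self.value})

    @classmethod
    def restore(cls, blob: str) -> "Counter":
        """Rebuild an equivalent program from a snapshot, possibly on another machine."""
        return cls(json.loads(blob)["value"])


original = Counter()
for _ in range(3):
    original.step()

blob = original.snapshot()      # capture the state
copy = Counter.restore(blob)    # re-implement it elsewhere

print(copy.step(), original.step())
```

Both instances continue from the same state and then evolve independently, which mirrors the question raised in this section of whether a restored copy is the original or a new entity.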

These philosophical issues have a long history. In 1775, Thomas Reid wrote: “I would be glad to know... whether when my brain has lost its original structure, and when some hundred years after the same materials are fabricated so curiously as to become an intelligent being, whether, I say that being will be me; or, if, two or three such beings should be formed out of my brain; whether they will all be me, and consequently one and the same intelligent being.”[41] Although the name of the hard problem of consciousness was coined in 1994, debate surrounding the problem itself is ancient. Augustine of Hippo argued against physicalist "Academians" in the 5th century, writing that consciousness cannot be an illusion because only a conscious being can be deceived or experience an illusion.[42] René Descartes, the founder of mind-body dualism, made a similar objection in the 17th century, coining the popular phrase "Je pense, donc je suis" ("I think, therefore I am").[43] Although physicalism is known to have been proposed in ancient times, Thomas Huxley was among the first to describe mental experience as merely an epiphenomenon of interactions within the brain, having no causal power of its own and being entirely downstream from the brain's activity.[44]

A considerable portion of transhumanists and singularitarians place great hope in the belief that they may become immortal, by creating one or many non-biological functional copies of their brains, thereby leaving their "biological shell". However, the philosopher and transhumanist Susan Schneider claims that at best, uploading would create a copy of the original person's mind.[45] Schneider agrees that consciousness has a computational basis, but this does not mean we can upload and survive. According to her views, "uploading" would probably result in the death of the original person's brain, while only outside observers can maintain the illusion of the original person still being alive. For it is implausible to think that one's consciousness would leave one's brain and travel to a remote location; ordinary physical objects do not behave this way. Ordinary objects (rocks, tables, etc.) are not simultaneously here, and elsewhere. At best, a copy of the original mind is created.[45] Neural correlates of consciousness, a sub-branch of neuroscience, states that consciousness may be thought of as a state-dependent property of some undefined complex, adaptive, and highly interconnected biological system.[46]

Others have argued against such conclusions. For example, Buddhist transhumanist James Hughes has pointed out that this consideration only goes so far: if one believes the self is an illusion, worries about survival are not reasons to avoid uploading,[47] and Keith Wiley has presented an argument wherein all resulting minds of an uploading procedure are granted equal primacy in their claim to the original identity, such that survival of the self is determined retroactively from a strictly subjective position.[48][49] Some have also asserted that consciousness is a part of an extra-biological system that is yet to be discovered; therefore it cannot be fully understood under the present constraints of neurobiology. Without the transference of consciousness, true mind-upload or perpetual immortality cannot be practically achieved.[50]

Another potential consequence of mind uploading is that the decision to "upload" may then create a mindless symbol manipulator instead of a conscious mind (see philosophical zombie).[51][52] If a computer could process sensory inputs to generate the same outputs that a human mind does (speech, muscle movements, etc.) without necessarily having any experience of consciousness, then it may be impossible to determine whether the uploaded mind is truly conscious, and not merely an automaton that externally behaves the way a human would. Thought experiments like the Chinese room raise fundamental questions about mind uploading: If an upload displays behaviors that are highly indicative of consciousness, or even verbally insists that it is conscious, does that prove it is conscious?[53] There might also be an absolute upper limit in processing speed, above which consciousness cannot be sustained. The subjectivity of consciousness precludes a definitive answer to this question.[54]

Numerous scientists, including Ray Kurzweil, believe that whether a separate entity is conscious is impossible to know with confidence, since consciousness is inherently subjective (see also: solipsism). Regardless, some scientists believe consciousness is the consequence of computational processes which are substrate-neutral. Still other scientists, prominent among them Roger Penrose, believe consciousness may emerge from some form of quantum computation that is dependent on the organic substrate (see quantum mind).[55][56][57]

In light of uncertainty about whether mind uploads are conscious, Sandberg proposes a cautious approach:[58]

Principle of assuming the most (PAM): Assume that any emulated system could have the same mental properties as the original system and treat it correspondingly.

Ethical and legal implications

The process of developing emulation technology raises ethical issues related to animal welfare and artificial consciousness.[58] The neuroscience required to develop brain emulation would require animal experimentation, first on invertebrates and then on small mammals before moving on to humans. Sometimes the animals would just need to be euthanized in order to extract, slice, and scan their brains, but sometimes behavioral and in vivo measures would be required, which might cause pain to living animals.[58]

In addition, the resulting animal emulations themselves might suffer, depending on one's views about consciousness.[58] Bancroft argues for the plausibility of consciousness in brain simulations on the basis of the "fading qualia" thought experiment of David Chalmers. He then concludes:[59] “If, as I argue above, a sufficiently detailed computational simulation of the brain is potentially operationally equivalent to an organic brain, it follows that we must consider extending protections against suffering to simulations.” Chalmers himself has argued that such virtual realities would be genuine realities.[60] However, if mind uploading occurs and the uploads are not conscious, there may be a significant opportunity cost. In the book Superintelligence, Nick Bostrom expresses concern that we could build a "Disneyland without children."[61]

It might help reduce emulation suffering to develop virtual equivalents of anaesthesia, as well as to omit processing related to pain and/or consciousness. However, some experiments might require a fully functioning and suffering animal emulation. Animals might also suffer by accident due to flaws and lack of insight into what parts of their brains are suffering.[58] Questions also arise regarding the moral status of partial brain emulations, as well as creating neuromorphic emulations that draw inspiration from biological brains but are built somewhat differently.[59]

Brain emulations could be erased by computer viruses or malware, without the need to destroy the underlying hardware. This may make assassination easier than for physical humans. The attacker might take the computing power for its own use.[62]

Many questions arise regarding the legal personhood of emulations.[63] Would they be given the rights of biological humans? If a person makes an emulated copy of themselves and then dies, does the emulation inherit their property and official positions? Could the emulation ask to "pull the plug" when its biological version was terminally ill or in a coma? Would it help to treat emulations as adolescents for a few years so that the biological creator would maintain temporary control? Would criminal emulations receive the death penalty, or would they be given forced data modification as a form of "rehabilitation"? Could an upload have marriage and child-care rights?[63]

If simulated minds became a reality and were assigned rights of their own, it might be difficult to ensure the protection of "digital human rights". For example, social science researchers might be tempted to secretly expose simulated minds, or whole isolated societies of simulated minds, to controlled experiments in which many copies of the same minds are exposed (serially or simultaneously) to different test conditions.[citation needed]

Research led by cognitive scientist Michael Laakasuo has shown that attitudes towards mind uploading are predicted by an individual's belief in an afterlife; the existence of mind uploading technology may threaten religious and spiritual notions of immortality and divinity.[64]

Political and economic implications

Emulations might be preceded by a technological arms race driven by first-strike advantages. Their emergence and existence may lead to increased risk of war, including inequality, power struggles, strong loyalty and willingness to die among emulations, and new forms of racism, xenophobia, and religious prejudice.[65][62] If emulations run much faster than humans, there might not be enough time for human leaders to make wise decisions or negotiate. It is possible that humans would react violently against the growing power of emulations, especially if that depresses human wages. Emulations may not trust each other, and even well-intentioned defensive measures might be interpreted as offense.[62]

The book The Age of Em by Robin Hanson poses many hypotheses on the nature of a society of mind uploads, including that the most common minds would be copies of adults with personalities conducive to long hours of productive specialized work.[66]

Emulation timelines and AI risk

Kenneth D. Miller, a professor of neuroscience at Columbia University and a co-director of the Center for Theoretical Neuroscience, raised doubts about the practicality of mind uploading. His major argument is that reconstructing neurons and their connections is in itself a formidable task, but it is far from being sufficient. Operation of the brain depends on the dynamics of electrical and biochemical signal exchange between neurons; therefore, capturing them in a single "frozen" state may prove insufficient. In addition, the nature of these signals may require modeling at the molecular level and beyond. Therefore, while not rejecting the idea in principle, Miller believes that the complexity of the "absolute" duplication of an individual mind is insurmountable for the nearest hundreds of years.[67]

There are very few feasible technologies that humans have refrained from developing. The neuroscience and computer-hardware technologies that may make brain emulation possible are widely desired for other reasons, and logically their development will continue into the future. We may also have brain emulations for a brief but significant period on the way to non-emulation based human-level AI.[66] Assuming that emulation technology will arrive, a question becomes whether we should accelerate or slow its advance.[62]

Arguments for speeding up brain-emulation research:

- If neuroscience is the bottleneck on brain emulation rather than computing power, emulation advances may be more erratic and unpredictable based on when new scientific discoveries happen.[62][68][69] Limited computing power would mean the first emulations would run slower and so would be easier to adapt to, and there would be more time for the technology to transition through society.[69]

- Improvements in manufacturing, 3D printing, and nanotechnology may accelerate hardware production,[62] which could increase the "computing overhang"[70] from excess hardware relative to neuroscience.

- If one AI-development group had a lead in emulation technology, it would have more subjective time to win an arms race to build the first superhuman AI. Because it would be less rushed, it would have more freedom to consider AI risks.[71][72]

Arguments for slowing brain-emulation research:

- Greater investment in brain emulation and associated cognitive science might enhance the ability of artificial intelligence (AI) researchers to create "neuromorphic" (brain-inspired) algorithms, such as neural networks, reinforcement learning, and hierarchical perception. This could accelerate risks from uncontrolled AI.[62][72] Participants at a 2011 AI workshop estimated an 85% probability that neuromorphic AI would arrive before brain emulation. This was based on the idea that brain emulation would require understanding of the workings and functions of the different brain components, along with the technological know-how to emulate neurons. To counter this idea, reverse engineering the Microsoft Windows code base is already hard, so reverse engineering the brain would likely be much harder. By a very narrow margin, the participants on balance leaned toward the view that accelerating brain emulation would increase expected AI risk.[71]

- Waiting might give society more time to think about the consequences of brain emulation and develop institutions to improve cooperation.[62][72]

Emulation research would also accelerate neuroscience as a whole, which might accelerate medical advances, cognitive enhancement, lie detectors, and capability for psychological manipulation.[72]

Emulations might be easier to control than de novo AI because:

- Human abilities, behavioral tendencies, and vulnerabilities are more thoroughly understood, thus control measures might be more intuitive and easier to plan.[71][72]

- Emulations could more easily inherit human motivations.[72]

- Emulations are harder to manipulate than de novo AI, because brains are messy and complicated; this could reduce risks of their rapid takeoff.[62][72] Also, emulations may be bulkier and require more hardware than AI, which would also slow the speed of a transition.[72] Unlike AI, an emulation would not be able to rapidly expand beyond the size of a human brain.[72] Emulations running at digital speeds would have less intelligence differential vis-à-vis AI and so might more easily control AI.[72]

As counterpoint to these considerations, Bostrom notes some downsides:

- Even if we better understand human behavior, the evolution of emulation behavior under self-improvement might be much less predictable than the evolution of safe de novo AI under self-improvement.[72]

- Emulations may not inherit all human motivations. Perhaps they would inherit our darker motivations or would behave abnormally in the unfamiliar environment of cyberspace.[72]

- Even if there is a slow takeoff toward emulations, there would still be a second transition to de novo AI later on. Two intelligence explosions may mean more total risk.[72]

Because of the postulated difficulties that a whole brain emulation-generated superintelligence would pose for the control problem, computer scientist Stuart J. Russell in his book Human Compatible rejects creating one, simply calling it "so obviously a bad idea".[73]

Advocates

In 1979, Hans Moravec described and endorsed mind uploading using a brain surgeon.[74] Moravec used a similar description in 1988, calling it "transmigration".[75]

Ray Kurzweil, director of engineering at Google, has long predicted that people will be able to "upload" their entire brains to computers and become "digitally immortal" by 2045. Kurzweil made this claim for many years, e.g. during his speech in 2013 at the Global Futures 2045 International Congress in New York, which claims to subscribe to a similar set of beliefs.[76] Mind uploading has also been advocated by a number of researchers in neuroscience and artificial intelligence, such as Marvin Minsky.[citation needed] In 1993, Joe Strout created a small web site called the Mind Uploading Home Page, and began advocating the idea in cryonics circles and elsewhere on the net. That site has not been actively updated in recent years, but it has spawned other sites including MindUploading.org, run by Randal A. Koene, who also moderates a mailing list on the topic. These advocates see mind uploading as a medical procedure which could eventually save countless lives.

Many transhumanists look forward to the development and deployment of mind uploading technology, with transhumanists such as Nick Bostrom predicting that it will become possible within the 21st century due to technological trends such as Moore's law.[6]

Michio Kaku, in collaboration with the Science Channel, hosted a documentary series, Sci Fi Science: Physics of the Impossible, based on his book Physics of the Impossible. Episode four, titled "How to Teleport", mentions that mind uploading via techniques such as quantum entanglement and whole brain emulation using an advanced MRI machine may enable people to be transported vast distances at near light-speed.

The book Beyond Humanity: CyberEvolution and Future Minds by Gregory S. Paul and Earl D. Cox is about the eventual (and, to the authors, almost inevitable) evolution of computers into sentient beings, but it also deals with human mind transfer. Richard Doyle's Wetwares: Experiments in PostVital Living deals extensively with uploading from the perspective of distributed embodiment, arguing for example that humans are currently part of the "artificial life phenotype". Doyle's vision reverses the polarity on uploading, with artificial life forms such as uploads actively seeking out biological embodiment as part of their reproductive strategy.

In fiction

Mind uploading, the transfer of an individual's personality to a computer, appears in several works of science fiction.[77] It is distinct from the concept of transferring a consciousness from one human body to another.[78][79] It is sometimes applied to a single person and other times to an entire society.[80] Recurring themes in these stories include whether the computerized mind is truly conscious, and if so, whether identity is preserved.[81] It is a common feature of the cyberpunk subgenre,[82] sometimes taking the form of digital immortality.[79][83]

See also

- BRAIN Initiative

- Brain transplant

- Brain-reading

- Cyborg

- Cylon (reimagining)

- Democratic transhumanism

- Human Brain Project

- Isolated brain

- Neuralink

- Open individualism

- Posthumanization

- Robotoid

- Ship of Theseus—thought experiment asking if objects having all parts replaced fundamentally remain the same object

- Simulation hypothesis

- Technologically enabled telepathy

- Teletransportation paradox

- Thought recording and reproduction device

- Turing test

- The Future of Work and Death

- Vertiginous question

- Chinese room

- 2045 Initiative

- Dmitry Itskov

- Miguel Nicolelis

- Neural network (machine learning)

References

- ^ Bamford, Sim (2012). "A framework for approaches to transfer of a mind's substrate" (PDF). International Journal of Machine Consciousness. 04 (1): 23–34. doi:10.1142/s1793843012400021. ISSN 1793-8430.

- ^ Goertzel, Ben; Iklé, Matthew (2012). "Introduction". International Journal of Machine Consciousness. 04: 1–3. doi:10.1142/S1793843012020015.

- ^ Sotala, Kaj; Valpola, Harri (June 2012). "Coalescing minds: brain uploading-related group mind scenarios" (PDF). International Journal of Machine Consciousness. 04 (1): 293–312. doi:10.1142/S1793843012400173. S2CID 6230653.

- ^ a b c Fan, Xue; Markram, Henry (2019-05-07). "A Brief History of Simulation Neuroscience". Frontiers in Neuroinformatics. 13: 32. doi:10.3389/fninf.2019.00032. ISSN 1662-5196. PMC 6513977. PMID 31133838.

- ^ Kay KN, Naselaris T, Prenger RJ, Gallant JL (March 2008). "Identifying natural images from human brain activity". Nature. 452 (7185): 352–5. Bibcode:2008Natur.452..352K. doi:10.1038/nature06713. PMC 3556484. PMID 18322462.

- ^ a b c d e f g Sandberg, Anders; Boström, Nick (2008). Whole Brain Emulation: A Roadmap (PDF). Technical Report #2008-3. Future of Humanity Institute, Oxford University. Retrieved 5 April 2009.

The basic idea is to take a particular brain, scan its structure in detail, and construct a software model of it that is so faithful to the original that, when run on appropriate hardware, it will behave in essentially the same way as the original brain.

- ^ Goertzel, Ben (December 2007). "Human-level artificial general intelligence and the possibility of a technological singularity: a reaction to Ray Kurzweil's The Singularity Is Near, and McDermott's critique of Kurzweil". Artificial Intelligence. 171 (18, Special Review Issue): 1161–1173. doi:10.1016/j.artint.2007.10.011.

- ^ "SFE: Upload". sf-encyclopedia.com. Retrieved 2024-03-24.

- ^ Hopfield, J. J. (1982-04-01). "Neural networks and physical systems with emergent collective computational abilities". Proceedings of the National Academy of Sciences. 79 (8): 2554–2558. Bibcode:1982PNAS...79.2554H. doi:10.1073/pnas.79.8.2554. ISSN 0027-8424. PMC 346238. PMID 6953413.

- ^ a b Koch, Christof; Tononi, Giulio (2008). "Can machines be conscious?" (PDF). IEEE Spectrum. 45 (6): 55. doi:10.1109/MSPEC.2008.4531463. S2CID 7226896.

- ^ a b "Tech Luminaries Address Singularity". IEEE. Archived from the original on 2009-05-01. Retrieved 2009-04-02.

- ^ Minsky, Marvin (June 1991). "Conscious Machines". Machinery of Consciousness. Proceedings, National Research Council of Canada, 75th Anniversary Symposium on Science in Society.

- ^ Llinas, R (2001). I of the vortex: from neurons to self. Cambridge: MIT Press. pp. 261–262. ISBN 978-0-262-62163-2.

- ^ Kurzweil, Ray (February 2000). "Live Forever–Uploading The Human Brain...Closer Than You Think". Psychology Today.

- ^ Martin, G. M. (1971). "Brief proposal on immortality: an interim solution". Perspectives in Biology and Medicine. 14 (2): 339–340. doi:10.1353/pbm.1971.0015. PMID 5546258. S2CID 71120068.

- ^ Rosenberg, Louis (2022-08-13). "Identity crisis: Artificial intelligence and the flawed logic of 'mind uploading'". VentureBeat. Retrieved 2024-05-10.

- ^ Prisco, Giulio (12 December 2012). "Uploaded e-crews for interstellar missions". kurzweilai.net. Retrieved 31 July 2015.

- ^ Bostrom, Nick (March 27, 2024). "Handout 10: downloading and brain editing". Deep Utopia: Life and Meaning in a Solved World. Ideapress. ISBN 978-1646871643.

- ^ Bostrom, Nick (March 27, 2024). "Handout 2: Some capabilities at technological maturity". Deep Utopia: Life and Meaning in a Solved World. Ideapress. ISBN 978-1646871643.

- ^ Caruso, Catherine (January 19, 2023). "A New Field of Neuroscience Aims to Map Connections in the Brain". Harvard Medical School. Retrieved 2024-05-10.

- ^ Bostrom, Nick (2017). "Speed superintelligence". Superintelligence: paths, dangers, strategies. Oxford University Press. ISBN 978-0-19-967811-2.

- ^ "Substrate-Independent Minds". carboncopies.org. Carboncopies.org Foundation. Archived from the original on 2014-01-03. Retrieved 2014-01-03.

- ^ Roadmap. "Given the complexities and conceptual issues of consciousness we will not examine criteria 6abc, but mainly examine achieving criteria 1–5." p. 11.

- ^ "New imaging method developed at Stanford reveals stunning details of brain connections". Stanford Medicine. 21 December 2009.

- ^ Merkle, R., 1989, Large scale analysis of neural structures Archived 2012-02-04 at the Wayback Machine, CSL-89-10 November 1989, [P89-00173]

- ^ Chalmers, David J. (2014-06-13). "Uploading: A Philosophical Analysis". Intelligence Unbound. pp. 102–118. doi:10.1002/9781118736302.ch6. ISBN 9781118736418.

- ^ a b ATLUM Project, Archived 2008-02-01 at the Wayback Machine.

- ^ Hagmann, Patric; Cammoun, Leila; Gigandet, Xavier; Meuli, Reto; Honey, Christopher J.; Wedeen, Van J.; Sporns, Olaf; Friston, Karl J. (2008). Friston, Karl J. (ed.). "Mapping the Structural Core of Human Cerebral Cortex". PLOS Biology. 6 (7): e159. doi:10.1371/journal.pbio.0060159. PMC 2443193. PMID 18597554.

- ^ Glover, Paul; Bowtell, Richard (2009). "Medical imaging: MRI rides the wave". Nature. 457 (7232): 971–972. Bibcode:2009Natur.457..971G. doi:10.1038/457971a. PMID 19225512. S2CID 205044426.

- ^ Niebur, E.; Erdös, P. (November 1993). "Theory of the locomotion of nematodes: control of the somatic motor neurons by interneurons". Mathematical Biosciences. 118 (1): 51–82. doi:10.1016/0025-5564(93)90033-7. ISSN 0025-5564. PMID 8260760.

- ^ Arena, Paolo; Patane, Luca; Termini, Pietro Savio (2010). "An insect brain computational model inspired by Drosophila melanogaster: Simulation results". The 2010 International Joint Conference on Neural Networks (IJCNN). pp. 1–8. doi:10.1109/IJCNN.2010.5596513. ISBN 978-1-4244-6916-1.

- ^ "Mouse brain simulated on computer". BBC. 2007-04-27. Retrieved 2024-05-10.

- ^ "Bluebrain - EPFL". EPFL. 19 May 2015. Archived from the original on 2006-01-14.

Once this replica is built, we will be able to do experiments that normally take us years or are prohibitively expensive or too difficult to perform. This will greatly accelerate the pace of research.

- ^ Fildes, Jonathan (22 July 2009). "Artificial brain '10 years away'". BBC. BBC News.

- ^ Epstein, Robert (18 May 2016). "The empty brain". Aeon. Retrieved 2021-04-04.

- ^ Theil, Stefan (2015-10-01). "Why the human brain project went wrong and how to fix it". Scientific American. Retrieved 2021-04-04.

- ^ Chalmers, David (1995). "Facing up to the problem of consciousness". Journal of Consciousness Studies. 2 (3): 200–219.

- ^ Kastrup, Bernardo (2018). "The Universe in Consciousness". Journal of Consciousness Studies. 25 (5–6): 125–155.

- ^ Cortese, Franco (June 17, 2013). "Clearing Up Misconceptions About Mind Uploading". h+ Media.

- ^ Yoonsuck Choe; Jaerock Kwon; Ji Ryang Chung (2012). "Time, Consciousness, and Mind Uploading" (PDF). International Journal of Machine Consciousness. 04 (1): 257. doi:10.1142/S179384301240015X.

- ^ "The Duplicates Paradox (The Duplicates Problem)". benbest.com.

- ^ Augustine of Hippo. "Book 11, Chapter 26". City of God.

- ^ Descartes, René (1637). "4". Discourse on the Method.

- ^ Robinson, William (2023), "Epiphenomenalism", in Zalta, Edward N.; Nodelman, Uri (eds.), The Stanford Encyclopedia of Philosophy (Summer 2023 ed.), Metaphysics Research Lab, Stanford University, retrieved 2024-05-16

- ^ a b Schneider, Susan (March 2, 2014). "The Philosophy of 'Her'". The New York Times. Retrieved May 7, 2014.

- ^ Squire, Larry R. (2008). Fundamental neuroscience (3rd ed.). Amsterdam: Elsevier / Academic Press. ISBN 9780123740199. OCLC 190867431.

- ^ Hughes, James (2013). Transhumanism and Personal Identity. Wiley.

- ^ Wiley, Keith (March 20, 2014). "Response to Susan Schneider's 'Philosophy of "Her"'". H+Magazine. Retrieved 7 May 2014.

- ^ Wiley, Keith (September 2014). A Taxonomy and Metaphysics of Mind-Uploading (1st ed.). Humanity+ Press and Alautun Press. ISBN 978-0692279847. Retrieved 16 October 2014.

- ^ Ruparel, Bhavik (2018-07-30). "On Achieving Immortality". medium.com. Retrieved 2018-07-31.

- ^ Hauskeller, Michael (2012). "My Brain, my Mind, and I: Some Philosophical Problems of Mind-Uploading". Academia.edu. 4 (1): 187–200.

- ^ Dvorsky, George (April 17, 2013). "You Might Never Upload Your Brain Into a Computer". io9.

- ^ Oto, Brandon (2011). "Seeking normative guidelines for novel future forms of consciousness" (PDF). University of California, Santa Cruz. Archived from the original (PDF) on 2014-01-03. Retrieved 2014-01-03.

- ^ Goertzel, Ben (2012). "When Should Two Minds Be Considered Versions of One Another?" (PDF).

- ^ Morem, Sally (April 21, 2013). "Goertzel Contra Dvorsky on Mind Uploading". h+ Media.

- ^ Rothblatt, Martine (2012). "The Terasem Mind Uploading Experiment" (PDF). International Journal of Machine Consciousness. 4 (1): 141–158. doi:10.1142/S1793843012400070. Archived from the original (PDF) on 2013-08-27.

- ^ Hopkins, Patrick D. (2012). "Why Uploading Will Not Work, or, the Ghosts Haunting Transhumanism" (PDF). International Journal of Machine Consciousness. 4 (1): 229–243. doi:10.1142/S1793843012400136. Archived from the original (PDF) on 2012-09-06.

- ^ a b c d e Sandberg, Anders (14 April 2014). "Ethics of brain emulations". Journal of Experimental & Theoretical Artificial Intelligence. 26 (3): 439–457. doi:10.1080/0952813X.2014.895113. S2CID 14545074.

- ^ a b Bancroft, Tyler D. (Aug 2013). "Ethical Aspects of Computational Neuroscience". Neuroethics. 6 (2): 415–418. doi:10.1007/s12152-012-9163-7. ISSN 1874-5504. S2CID 145511899.

- ^ Chalmers, David (2022). Reality+: Virtual Worlds and the Problems of Philosophy. New York: W. W. Norton & Company. ISBN 9780393635805.

- ^ Bostrom, Nick (2014). Superintelligence: Paths, Dangers, Strategies. Oxford, England: Oxford University Press. ISBN 978-0199678112.

- ^ a b c d e f g h i Eckersley, Peter; Sandberg, Anders (Dec 2013). "Is Brain Emulation Dangerous?". Journal of Artificial General Intelligence. 4 (3): 170–194. Bibcode:2013JAGI....4..170E. doi:10.2478/jagi-2013-0011. ISSN 1946-0163.

- ^ a b Muzyka, Kamil (Dec 2013). "The Outline of Personhood Law Regarding Artificial Intelligences and Emulated Human Entities". Journal of Artificial General Intelligence. 4 (3): 164–169. Bibcode:2013JAGI....4..164M. doi:10.2478/jagi-2013-0010. ISSN 1946-0163.

- ^ Laakasuo, Michael; Sundvall, Jukka; Drosinou, Marianna; et al. (2023). "Would you exchange your soul for immortality? – Existential Meaning and Afterlife Beliefs Predict Mind Upload Approval". Frontiers in Psychology. 14. doi:10.3389/fpsyg.2023.1254846. PMC 10757642. PMID 38162973.

- ^ Hurtado Hurtado, Joshua (2022-07-18). "Envisioning postmortal futures: six archetypes on future societal approaches to seeking immortality". Mortality. 29: 18–36. doi:10.1080/13576275.2022.2100250. ISSN 1357-6275. S2CID 250650618.

- ^ a b Hanson, Robin (2016). The Age of Em. Oxford, England: Oxford University Press. p. 528. ISBN 9780198754626.

- ^ Miller, Kenneth D. (October 10, 2015). "Will You Ever Be Able to Upload Your Brain?". The New York Times.

- ^ Shulman, Carl; Sandberg, Anders (2010). Mainzer, Klaus (ed.). "Implications of a Software-Limited Singularity" (PDF). ECAP10: VIII European Conference on Computing and Philosophy. Retrieved 17 May 2014.

- ^ a b Hanson, Robin (26 Nov 2009). "Bad Emulation Advance". Overcoming Bias. Retrieved 28 June 2014.

- ^ Muehlhauser, Luke; Salamon, Anna (2012). "Intelligence Explosion: Evidence and Import" (PDF). In Eden, Amnon; Søraker, Johnny; Moor, James H.; Steinhart, Eric (eds.). Singularity Hypotheses: A Scientific and Philosophical Assessment. Springer.

- ^ a b c Salamon, Anna; Muehlhauser, Luke (2012). "Singularity Summit 2011 Workshop Report" (PDF). Machine Intelligence Research Institute. Retrieved 28 June 2014.

- ^ a b c d e f g h i j k l m Bostrom, Nick (2014). "Ch. 14: The strategic picture". Superintelligence: Paths, Dangers, Strategies. Oxford University Press. ISBN 978-0199678112.

- ^ Russell, Stuart (2019). Human Compatible: Artificial Intelligence and the Problem of Control. Viking Press. ISBN 978-0-525-55861-3. OCLC 1113410915.

- ^ Moravec, Hans (1979). "Today's Computers, Intelligent Machines and Our Future". Field Robotics Center, Robotics Institute, Carnegie Mellon University. Archived from the original on 2022-12-19.

- ^ Moravec, Hans (1988). Mind Children.

- ^ "'Mind Uploading' & Digital Immortality May Be Reality By 2045, Futurists Say". Huffington Post. June 18, 2013. Retrieved March 8, 2024.

- ^ Langford, David; Stableford, Brian (2022). "Upload". In Clute, John; Langford, David; Sleight, Graham (eds.). The Encyclopedia of Science Fiction (4th ed.). Retrieved 2024-03-29.

- ^ Webb, Stephen (2017). "Mind Uploading". All the Wonder that Would Be: Exploring Past Notions of the Future. Science and Fiction. Springer. pp. 276–278. doi:10.1007/978-3-319-51759-9_10. ISBN 978-3-319-51759-9.

- ^ a b

- Fischer, John Martin; Curl, Ruth (1996). "Philosophical Models of Immortality in Science Fiction". In Slusser, George; Westfahl, Gary; Rabkin, Eric S. (eds.). Immortal Engines: Life Extension and Immortality in Science Fiction and Fantasy. Athens, Georgia: University of Georgia Press. pp. 3–12. ISBN 0-8203-1733-0. OCLC 34319944.

- Fischer, John Martin; Curl, Ruth (2009). "Appendix to Chapter 6: Philosophical Models of Immortality in Science Fiction". In Fischer, John Martin (ed.). Our Stories: Essays on Life, Death, and Free Will. Oxford University Press. pp. 93–101. ISBN 978-0-19-537495-7.

- ^ Langford, David (2005). "Computers". In Westfahl, Gary (ed.). The Greenwood Encyclopedia of Science Fiction and Fantasy: Themes, Works, and Wonders. Greenwood Publishing Group. p. 154. ISBN 978-0-313-32951-7.

- ^ Blackford, Russell (2017). "Reshaping the Human". Science Fiction and the Moral Imagination: Visions, Minds, Ethics. Science and Fiction. Springer. pp. 173–174. ISBN 978-3-319-61685-8.

- ^ Booker, M. Keith (2014). "Artificial Intelligence (AI)". Historical Dictionary of Science Fiction in Literature. Rowman & Littlefield. p. 28. ISBN 978-0-8108-7884-6.

Cyberpunk writers and their successors have also frequently imagined the uploading of human minds into computers, thus creating a special sort of artificial intelligence that can free individuals of the limitations of biological bodies, a notion that would be notably extended in the work of Greg Egan.

- ^ Westfahl, Gary (2005). "Immortality and Longevity". In Westfahl, Gary (ed.). The Greenwood Encyclopedia of Science Fiction and Fantasy: Themes, Works, and Wonders. Greenwood Publishing Group. pp. 418–420. ISBN 978-0-313-32951-7.