In probability theory and statistics, complex random variables are a generalization of real-valued random variables to complex numbers, i.e. the possible values a complex random variable may take are complex numbers.[1] Complex random variables can always be considered as pairs of real random variables: their real and imaginary parts. Therefore, the distribution of one complex random variable may be interpreted as the joint distribution of two real random variables.

Some concepts of real random variables have a straightforward generalization to complex random variables—e.g., the definition of the mean of a complex random variable. Other concepts are unique to complex random variables.

Applications of complex random variables are found in digital signal processing,[2] quadrature amplitude modulation and information theory.

A complex random variable $Z$ on the probability space $(\Omega,\mathcal{F},P)$ is a function $Z\colon\Omega\to\mathbb{C}$ such that both its real part $\Re(Z)$ and its imaginary part $\Im(Z)$ are real random variables on $(\Omega,\mathcal{F},P)$.

Consider a random variable that may take only the three complex values $1+i$, $1-i$ and $2$, with probabilities as specified in the table. This is a simple example of a complex random variable.

| Probability $P(z)$ | Value $z$ |
|---|---|
| $\tfrac{1}{4}$ | $1+i$ |
| $\tfrac{1}{4}$ | $1-i$ |
| $\tfrac{1}{2}$ | $2$ |

The expectation of this random variable may be simply calculated:

$$\operatorname{E}[Z] = \tfrac{1}{4}(1+i) + \tfrac{1}{4}(1-i) + \tfrac{1}{2}\cdot 2 = \tfrac{3}{2}.$$
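As a quick numerical check, the following minimal Python sketch computes $\operatorname{E}[Z]$ for the three-point distribution in the table, once directly as a probability-weighted sum of the values and once by averaging the real and imaginary parts separately. The dictionary `pmf` and the helper names are illustrative choices, not part of any library.

```python
# Three-point distribution from the table: value z -> probability P(z).
pmf = {1 + 1j: 0.25, 1 - 1j: 0.25, 2 + 0j: 0.5}


def expectation(pmf):
    """E[Z] as the probability-weighted sum of the values."""
    return sum(z * p for z, p in pmf.items())


def expectation_via_parts(pmf):
    """E[Z] = E[Re Z] + i E[Im Z], averaging real and imaginary parts separately."""
    mean_re = sum(z.real * p for z, p in pmf.items())
    mean_im = sum(z.imag * p for z, p in pmf.items())
    return complex(mean_re, mean_im)


print(expectation(pmf))            # (1.5+0j)
print(expectation_via_parts(pmf))  # (1.5+0j)
```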

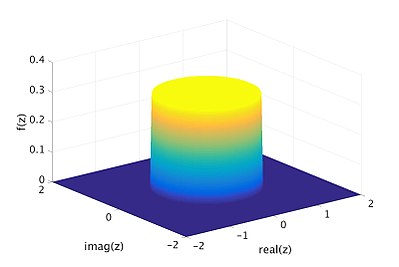

Another example of a complex random variable is the uniform distribution over the filled unit circle, i.e. the set $\{z\in\mathbb{C}\mid |z|\le 1\}$. This random variable is an example of a complex random variable for which the probability density function is defined. The density function is shown as the yellow disk and dark blue base in the following figure.
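A minimal Python sketch, using only the standard library's `random`, `math` and `cmath` modules, that draws samples from this uniform distribution on the unit disk. The radius is drawn as $\sqrt{U}$ with $U$ uniform on $[0,1)$ so that samples are uniform in area rather than crowded near the center; the density is $1/\pi$ on the disk because the disk has area $\pi$. The function names are hypothetical.

```python
import cmath
import math
import random


def sample_unit_disk(rng):
    """Draw one Z uniformly from {z : |z| <= 1}.

    The radius sqrt(U) satisfies P(R <= r) = r**2, the fraction of the
    disk's area within radius r; the angle is uniform on [-pi, pi].
    """
    r = math.sqrt(rng.random())
    theta = rng.uniform(-math.pi, math.pi)
    return cmath.rect(r, theta)


def density_unit_disk(z):
    """f_Z(z) = 1/pi on the disk (whose area is pi) and 0 outside it."""
    return 1 / math.pi if abs(z) <= 1 else 0.0


rng = random.Random(0)  # fixed seed for reproducibility
samples = [sample_unit_disk(rng) for _ in range(100_000)]
mean = sum(samples) / len(samples)
mean_abs2 = sum(abs(z) ** 2 for z in samples) / len(samples)
print(mean)       # close to 0 by symmetry
print(mean_abs2)  # close to 1/2, since E[|Z|^2] = E[U] = 1/2
```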

Complex normal distribution

Complex Gaussian random variables are often encountered in applications. They are a straightforward generalization of real Gaussian random variables. The following plot shows an example of the distribution of such a variable.

Cumulative distribution function

The generalization of the cumulative distribution function from real to complex random variables is not obvious, because expressions of the form $P(Z\le 1+3i)$ make no sense: the complex numbers have no natural ordering. However, expressions of the form $P(\Re(Z)\le 1,\ \Im(Z)\le 3)$ do make sense. Therefore, the cumulative distribution function $F_Z\colon\mathbb{C}\to[0,1]$ of a complex random variable is defined via the joint distribution of its real and imaginary parts:

$$F_Z(z) = F_{\Re(Z),\Im(Z)}(\Re(z),\Im(z)) = P(\Re(Z)\le\Re(z),\ \Im(Z)\le\Im(z)) \tag{Eq.1}$$
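To illustrate Eq.1, the sketch below evaluates $F_Z$ for the three-point distribution from the table, using exact fractions for the probabilities. The helper `cdf` is a hypothetical name; it adds up the probabilities of all values whose real and imaginary parts are both at most those of $z$.

```python
from fractions import Fraction

# Three-point distribution from the table: value z -> probability P(z).
pmf = {
    complex(1, 1): Fraction(1, 4),
    complex(1, -1): Fraction(1, 4),
    complex(2, 0): Fraction(1, 2),
}


def cdf(pmf, z):
    """F_Z(z) = P(Re Z <= Re z, Im Z <= Im z), per Eq.1."""
    return sum(
        (p for v, p in pmf.items() if v.real <= z.real and v.imag <= z.imag),
        Fraction(0),
    )


print(cdf(pmf, complex(1, 3)))  # 1/2: only 1+i and 1-i qualify
print(cdf(pmf, complex(1, 0)))  # 1/4: only 1-i qualifies
print(cdf(pmf, complex(2, 1)))  # 1: every value qualifies
print(cdf(pmf, complex(0, 5)))  # 0: no value has real part <= 0
```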

Probability density function

The probability density function of a complex random variable is defined as

$$f_Z(z) = f_{\Re(Z),\Im(Z)}(\Re(z),\Im(z)),$$

i.e. the value of the density function at a point $z\in\mathbb{C}$ is defined to be equal to the value of the joint density of the real and imaginary parts of the random variable evaluated at the point $(\Re(z),\Im(z))$.

An equivalent definition is given by

$$f_Z(z) = \frac{\partial^2}{\partial x\,\partial y}P(\Re(Z)\le x,\ \Im(Z)\le y),$$

where $x=\Re(z)$ and $y=\Im(z)$.

As in the real case, the density function may not exist.
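The equivalence of the two definitions can be checked numerically. The sketch below assumes a hypothetical $Z$ whose real part is exponential with rate 1 and whose imaginary part is, independently, exponential with rate 2, so that the joint CDF is the product of the marginal CDFs. A central finite difference of the joint CDF approximates the mixed partial derivative and should match $f_Z(z)$ to several digits.

```python
import math


# Hypothetical example: Re(Z) ~ Exponential(rate 1), Im(Z) ~ Exponential(rate 2), independent.
def joint_cdf(x, y):
    """P(Re Z <= x, Im Z <= y), the product of the two marginal CDFs."""
    fx = 1 - math.exp(-x) if x > 0 else 0.0
    fy = 1 - math.exp(-2 * y) if y > 0 else 0.0
    return fx * fy


def density(z):
    """f_Z(z) = f_{Re Z, Im Z}(Re z, Im z), the product of the marginal densities."""
    x, y = z.real, z.imag
    if x < 0 or y < 0:
        return 0.0
    return math.exp(-x) * 2 * math.exp(-2 * y)


def mixed_partial(F, x, y, h=1e-3):
    """Central finite-difference approximation of d^2 F / (dx dy)."""
    return (F(x + h, y + h) - F(x + h, y - h)
            - F(x - h, y + h) + F(x - h, y - h)) / (4 * h * h)


z = complex(0.5, 0.3)
print(density(z))                                # exact value 2*exp(-1.1)
print(mixed_partial(joint_cdf, z.real, z.imag))  # agrees to several digits
```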

The expectation of a complex random variable is defined based on the definition of the expectation of a real random variable:[3]: p. 112

$$\operatorname{E}[Z] = \operatorname{E}[\Re(Z)] + i\,\operatorname{E}[\Im(Z)] \tag{Eq.2}$$

Note that the expectation of a complex random variable does not exist if $\operatorname{E}[\Re(Z)]$ or $\operatorname{E}[\Im(Z)]$ does not exist.

If the complex random variable $Z$ has a probability density function $f_Z(z)$, then the expectation is given by

$$\operatorname{E}[Z] = \iint_{\mathbb{C}} z\cdot f_Z(z)\,dx\,dy,$$

where $x=\Re(z)$ and $y=\Im(z)$.
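A minimal sketch of the density form of the expectation, assuming a hypothetical $Z$ that is uniform on the closed disk of radius 1 centred at $1+2i$. The double integral is approximated by a midpoint Riemann sum over a square grid covering the disk, with $x=\Re(z)$ and $y=\Im(z)$; by symmetry the result should be close to the centre $1+2i$. The names `CENTER` and `expectation_from_density` are illustrative.

```python
import math

CENTER = complex(1, 2)  # hypothetical centre of the disk


def density(z):
    """Uniform density on the closed disk |z - CENTER| <= 1 (area pi)."""
    return 1 / math.pi if abs(z - CENTER) <= 1 else 0.0


def expectation_from_density(f, re_range, im_range, n=400):
    """Midpoint-rule approximation of the double integral of z * f(z) dx dy."""
    (x0, x1), (y0, y1) = re_range, im_range
    dx, dy = (x1 - x0) / n, (y1 - y0) / n
    total = 0j
    for i in range(n):
        x = x0 + (i + 0.5) * dx
        for j in range(n):
            z = complex(x, y0 + (j + 0.5) * dy)
            total += z * f(z)
    return total * dx * dy


approx_mean = expectation_from_density(density, (0, 2), (1, 3))
print(approx_mean)  # close to (1+2j)
```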

If the complex random variable $Z$ has a probability mass function $p_Z(z)$, then the expectation is given by

$$\operatorname{E}[Z] = \sum_{z} z\cdot p_Z(z),$$

where the sum runs over the countably many values $z\in\mathbb{C}$ that $Z$ can take.

Properties

Whenever the expectation of a complex random variable exists, taking the expectation and complex conjugation commute:

$$\overline{\operatorname{E}[Z]} = \operatorname{E}[\overline{Z}].$$

The expected value operator $\operatorname{E}[\cdot]$ is linear in the sense that

$$\operatorname{E}[aZ+bW] = a\operatorname{E}[Z] + b\operatorname{E}[W]$$

for any complex coefficients $a,b$, even if $Z$ and $W$ are not independent.
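The sketch below checks both properties on a small, hypothetical joint distribution of a dependent pair $(Z,W)$; the outcomes, probabilities and coefficients $a,b$ are arbitrary choices made for illustration.

```python
# Hypothetical joint distribution of a dependent pair (Z, W): (z, w) -> probability.
joint = {
    (1 + 1j, 2j): 0.5,
    (1 - 1j, 1 + 0j): 0.25,
    (2 + 0j, -1 + 1j): 0.25,
}


def E(g):
    """Expectation of g(Z, W) under the joint pmf."""
    return sum(g(z, w) * p for (z, w), p in joint.items())


a, b = 2 - 1j, 0.5j  # arbitrary complex coefficients

# Linearity: E[aZ + bW] = a E[Z] + b E[W], no independence needed.
lhs = E(lambda z, w: a * z + b * w)
rhs = a * E(lambda z, w: z) + b * E(lambda z, w: w)
print(abs(lhs - rhs) < 1e-12)  # True

# Conjugation commutes with expectation.
conj_of_mean = E(lambda z, w: z).conjugate()
mean_of_conj = E(lambda z, w: z.conjugate())
print(abs(conj_of_mean - mean_of_conj) < 1e-12)  # True
```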

Variance and pseudo-variance

The variance is defined in terms of absolute squares as:[3]: 117

$$\operatorname{K}_{ZZ} = \operatorname{Var}[Z] = \operatorname{E}\left[\left|Z-\operatorname{E}[Z]\right|^2\right] = \operatorname{E}[|Z|^2] - \left|\operatorname{E}[Z]\right|^2 \tag{Eq.3}$$

Properties

The variance is always a nonnegative real number. It is equal to the sum of the variances of the real and imaginary parts of the complex random variable:

$$\operatorname{Var}[Z] = \operatorname{Var}[\Re(Z)] + \operatorname{Var}[\Im(Z)].$$
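For the three-point distribution in the table, the following sketch computes the variance both from the definition and from the shortcut in Eq.3, and compares it with the sum of the variances of the real and imaginary parts. All three should equal $3/4$ up to floating-point rounding. Helper names are illustrative.

```python
# Three-point distribution from the table: value z -> probability P(z).
pmf = {1 + 1j: 0.25, 1 - 1j: 0.25, 2 + 0j: 0.5}


def mean(g):
    """E[g(Z)] under pmf."""
    return sum(g(z) * p for z, p in pmf.items())


mu = mean(lambda z: z)
var_def = mean(lambda z: abs(z - mu) ** 2)                 # E[|Z - E[Z]|^2]
var_shortcut = mean(lambda z: abs(z) ** 2) - abs(mu) ** 2  # E[|Z|^2] - |E[Z]|^2
var_re = mean(lambda z: (z.real - mu.real) ** 2)           # Var[Re Z] = 1/4
var_im = mean(lambda z: (z.imag - mu.imag) ** 2)           # Var[Im Z] = 1/2

print(var_def, var_shortcut, var_re + var_im)  # each approximately 0.75
```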

The variance of a linear combination of complex random variables may be calculated using the following formula:

$$\operatorname{Var}\left[\sum_{k=1}^{N} a_k Z_k\right] = \sum_{i=1}^{N}\sum_{j=1}^{N} a_i\overline{a_j}\operatorname{Cov}[Z_i,Z_j].$$
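The formula can be checked on simulated data. The sketch below assumes three hypothetical, correlated complex variables built from Gaussian draws; because empirical (sample) moments obey the same algebra as true moments, the empirical variance of $\sum_k a_k Z_k$ matches the double sum of empirical covariances up to rounding. The covariance uses the convention with the conjugate on the second argument, $\operatorname{Cov}[X,Y]=\operatorname{E}[(X-\operatorname{E}[X])\overline{(Y-\operatorname{E}[Y])}]$.

```python
import random

rng = random.Random(1)  # fixed seed for reproducibility
n_samples, N = 2000, 3


def draw():
    """One joint draw of (Z_1, Z_2, Z_3); hypothetical, deliberately correlated."""
    g = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(N)]
    return [g[0], g[0] + g[1], 1j * g[0] + 0.5 * g[2]]


rows = [draw() for _ in range(n_samples)]  # rows[t][k]: t-th sample of Z_k
cols = list(zip(*rows))                    # cols[k]: all samples of Z_k


def mean(xs):
    return sum(xs) / len(xs)


def cov(xs, ys):
    """Empirical Cov[X, Y] = mean of (X - mean X) * conj(Y - mean Y)."""
    mx, my = mean(xs), mean(ys)
    return mean([(x - mx) * (y - my).conjugate() for x, y in zip(xs, ys)])


a = [1 + 2j, -0.5, 3j]  # arbitrary complex coefficients
combo = [sum(a[k] * row[k] for k in range(N)) for row in rows]

lhs = cov(combo, combo).real  # empirical Var[sum_k a_k Z_k]
rhs = sum(a[i] * a[j].conjugate() * cov(cols[i], cols[j])
          for i in range(N) for j in range(N))
print(lhs, rhs)  # equal up to rounding; rhs has a negligible imaginary part
```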

The pseudo-variance is a special case of the pseudo-covariance and is defined in terms of ordinary complex squares, given by:

$$\operatorname{J}_{ZZ} = \operatorname{E}[(Z-\operatorname{E}[Z])^2] = \operatorname{E}[Z^2] - (\operatorname{E}[Z])^2 \tag{Eq.4}$$

Unlike the variance of $Z$, which is always real and nonnegative, the pseudo-variance of $Z$ is in general complex.
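A minimal sketch contrasting the two quantities on the three-point distribution from the table and on a hypothetical $Z=(1+i)X$ with $X=\pm1$ equally likely. The only difference between the two helper functions is the missing complex conjugate in the pseudo-variance, which is what allows $\operatorname{J}_{ZZ}$ to be negative or non-real.

```python
def mean(g, pmf):
    """E[g(Z)] for a pmf given as {value: probability}."""
    return sum(g(z) * p for z, p in pmf.items())


def variance(pmf):
    """K_ZZ = E[|Z - E[Z]|^2] (Eq.3): always real and nonnegative."""
    mu = mean(lambda z: z, pmf)
    return mean(lambda z: abs(z - mu) ** 2, pmf)


def pseudo_variance(pmf):
    """J_ZZ = E[(Z - E[Z])^2] (Eq.4): no conjugate, so it can be any complex number."""
    mu = mean(lambda z: z, pmf)
    return mean(lambda z: (z - mu) ** 2, pmf)


# Three-point distribution from the table.
pmf = {1 + 1j: 0.25, 1 - 1j: 0.25, 2 + 0j: 0.5}
print(variance(pmf), pseudo_variance(pmf))    # about 0.75 and -0.25

# Hypothetical Z = (1+i)X with X = +1 or -1 equally likely.
pmf2 = {1 + 1j: 0.5, -1 - 1j: 0.5}
print(variance(pmf2), pseudo_variance(pmf2))  # about 2 and 2j
```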

Covariance matrix of real and imaginary parts

For a general complex random variable, the pair $(\Re(Z),\Im(Z))$ has a covariance matrix of the form:

$$\begin{bmatrix}\operatorname{Var}[\Re(Z)] & \operatorname{Cov}[\Im(Z),\Re(Z)]\\ \operatorname{Cov}[\Re(Z),\Im(Z)] & \operatorname{Var}[\Im(Z)]\end{bmatrix}$$

The matrix is symmetric, so

$$\operatorname{Cov}[\Re(Z),\Im(Z)] = \operatorname{Cov}[\Im(Z),\Re(Z)].$$

Its elements equal:

$$\begin{aligned}&\operatorname{Var}[\Re(Z)] = \tfrac{1}{2}\operatorname{Re}(\operatorname{K}_{ZZ}+\operatorname{J}_{ZZ})\\ &\operatorname{Var}[\Im(Z)] = \tfrac{1}{2}\operatorname{Re}(\operatorname{K}_{ZZ}-\operatorname{J}_{ZZ})\\ &\operatorname{Cov}[\Re(Z),\Im(Z)] = \tfrac{1}{2}\operatorname{Im}(\operatorname{J}_{ZZ})\end{aligned}$$

Conversely:

$$\begin{aligned}&\operatorname{K}_{ZZ} = \operatorname{Var}[\Re(Z)] + \operatorname{Var}[\Im(Z)]\\ &\operatorname{J}_{ZZ} = \operatorname{Var}[\Re(Z)] - \operatorname{Var}[\Im(Z)] + 2i\operatorname{Cov}[\Re(Z),\Im(Z)]\end{aligned}$$
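These two sets of formulas can be written as a pair of inverse conversions. In the sketch below (function names hypothetical), the input is the three-point distribution from the table, which has $\operatorname{K}_{ZZ}=3/4$ and $\operatorname{J}_{ZZ}=-1/4$.

```python
def real_cov_from_kj(K, J):
    """Return (Var[Re Z], Var[Im Z], Cov[Re Z, Im Z]) from K_ZZ and J_ZZ."""
    K, J = complex(K), complex(J)
    var_re = 0.5 * (K + J).real
    var_im = 0.5 * (K - J).real
    cov_re_im = 0.5 * J.imag
    return var_re, var_im, cov_re_im


def kj_from_real_cov(var_re, var_im, cov_re_im):
    """Return (K_ZZ, J_ZZ) from the 2x2 covariance matrix of (Re Z, Im Z)."""
    K = var_re + var_im
    J = complex(var_re - var_im, 2 * cov_re_im)
    return K, J


print(real_cov_from_kj(0.75, -0.25))     # (0.25, 0.5, 0.0)
print(kj_from_real_cov(0.25, 0.5, 0.0))  # (0.75, (-0.25+0j))
```

Applying one function after the other returns the original values, which is a quick consistency check of the two formula sets.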

Covariance and pseudo-covariance

The covariance between two complex random variables $Z, W$ is defined as[3]: 119

$$\operatorname{K}_{ZW} = \operatorname{Cov}[Z,W] = \operatorname{E}[(Z-\operatorname{E}[Z])\overline{(W-\operatorname{E}[W])}] = \operatorname{E}[Z\overline{W}] - \operatorname{E}[Z]\operatorname{E}[\overline{W}] \tag{Eq.5}$$

Notice the complex conjugation of the second factor in the definition.

In contrast to real random variables, a pseudo-covariance (also called complementary variance) is also defined:

$$\operatorname{J}_{ZW} = \operatorname{Cov}[Z,\overline{W}] = \operatorname{E}[(Z-\operatorname{E}[Z])(W-\operatorname{E}[W])] = \operatorname{E}[ZW] - \operatorname{E}[Z]\operatorname{E}[W] \tag{Eq.6}$$

The second-order statistics are fully characterized by the covariance and the pseudo-covariance.
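A minimal sketch of Eq.5 and Eq.6 on simulated data, assuming a hypothetical pair in which $W$ is $Z$ plus independent noise. It also checks the identity $\operatorname{J}_{ZW}=\operatorname{Cov}[Z,\overline{W}]$. For this construction one expects $\operatorname{K}_{ZW}\approx\operatorname{E}[|Z|^2]=2$ and $\operatorname{J}_{ZW}\approx\operatorname{E}[Z^2]=0$.

```python
import random

rng = random.Random(2)  # fixed seed for reproducibility

# Hypothetical pair: W = Z + noise, so Z and W are correlated.
zs = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(5000)]
ws = [z + 0.3 * complex(rng.gauss(0, 1), rng.gauss(0, 1)) for z in zs]


def mean(xs):
    return sum(xs) / len(xs)


def covariance(xs, ys):
    """K_XY = E[(X - E X) * conj(Y - E Y)], Eq.5."""
    mx, my = mean(xs), mean(ys)
    return mean([(x - mx) * (y - my).conjugate() for x, y in zip(xs, ys)])


def pseudo_covariance(xs, ys):
    """J_XY = E[(X - E X) * (Y - E Y)], Eq.6: no conjugate."""
    mx, my = mean(xs), mean(ys)
    return mean([(x - mx) * (y - my) for x, y in zip(xs, ys)])


K = covariance(zs, ws)
J = pseudo_covariance(zs, ws)
J_alt = covariance(zs, [w.conjugate() for w in ws])  # Cov[Z, conj(W)]
print(K, J, J_alt)  # K near 2, J near 0, J and J_alt agree
```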

Properties

The covariance has the following properties:

$$\begin{aligned}&\operatorname{Cov}[Z,W] = \overline{\operatorname{Cov}[W,Z]}\\ &\operatorname{Cov}[\alpha Z,W] = \alpha\operatorname{Cov}[Z,W]\\ &\operatorname{Cov}[Z,\alpha W] = \overline{\alpha}\operatorname{Cov}[Z,W]\\ &\operatorname{Cov}[Z_1+Z_2,W] = \operatorname{Cov}[Z_1,W] + \operatorname{Cov}[Z_2,W]\\ &\operatorname{Cov}[Z,W_1+W_2] = \operatorname{Cov}[Z,W_1] + \operatorname{Cov}[Z,W_2]\\ &\operatorname{Cov}[Z,Z] = \operatorname{Var}[Z]\end{aligned}$$
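The properties above can be verified exactly (up to rounding) on any finite joint distribution. The sketch below uses a hypothetical three-outcome joint pmf of $(Z_1,Z_2,W)$ and an arbitrary coefficient $\alpha$; the helper names are illustrative.

```python
# Hypothetical joint pmf of (Z1, Z2, W): outcome -> probability.
joint = {
    (1 + 1j, 2 + 0j, 1j): 0.25,
    (1 - 1j, -1j, 2 + 1j): 0.25,
    (2 + 0j, 1 + 1j, -1 + 0j): 0.5,
}


def E(f):
    """E[f(Z1, Z2, W)] under the joint pmf."""
    return sum(f(*outcome) * p for outcome, p in joint.items())


def cov(f, g):
    """Cov[X, Y] = E[X conj(Y)] - E[X] E[conj(Y)], with X = f(...), Y = g(...)."""
    return E(lambda *o: f(*o) * g(*o).conjugate()) - E(f) * E(g).conjugate()


def Z1(z1, z2, w):
    return z1


def Z2(z1, z2, w):
    return z2


def W(z1, z2, w):
    return w


alpha = 2 - 3j  # arbitrary complex scalar

checks = {
    "Cov[Z,W] = conj(Cov[W,Z])": (cov(Z1, W), cov(W, Z1).conjugate()),
    "Cov[aZ,W] = a Cov[Z,W]": (cov(lambda *o: alpha * Z1(*o), W), alpha * cov(Z1, W)),
    "Cov[Z,aW] = conj(a) Cov[Z,W]": (cov(Z1, lambda *o: alpha * W(*o)),
                                     alpha.conjugate() * cov(Z1, W)),
    "Cov[Z1+Z2,W] = Cov[Z1,W] + Cov[Z2,W]": (cov(lambda *o: Z1(*o) + Z2(*o), W),
                                             cov(Z1, W) + cov(Z2, W)),
    "Cov[Z,Z] = Var[Z]": (cov(Z1, Z1), E(lambda *o: abs(Z1(*o) - E(Z1)) ** 2)),
}
for name, (lhs, rhs) in checks.items():
    print(name, abs(lhs - rhs) < 1e-12)  # True for every property
```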

Uncorrelatedness: two complex random variables $Z$ and $W$ are called uncorrelated if $\operatorname{K}_{ZW} = \operatorname{J}_{ZW} = 0$ (see also: uncorrelatedness (probability theory)).

Orthogonality: two complex random variables $Z$ and $W$ are called orthogonal if $\operatorname{E}[Z\overline{W}] = 0$.
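Both conditions in the definition of uncorrelatedness matter. A hypothetical $Z$ that is uniform on the four points $\pm1\pm i$, paired with $W=\overline{Z}$, has $\operatorname{K}_{ZW}=\operatorname{E}[Z^2]=0$ (so $Z$ and $W$ are also orthogonal) but $\operatorname{J}_{ZW}=\operatorname{E}[|Z|^2]=2$, so they are not uncorrelated. A minimal sketch that enumerates the four outcomes:

```python
from itertools import product

# Hypothetical example: Z uniform on the four points {±1 ± i}, W = conj(Z).
outcomes = [complex(x, y) for x, y in product((1, -1), repeat=2)]
p = 1 / len(outcomes)


def E(g):
    """E[g(Z)] with all four outcomes equally likely."""
    return sum(g(z) * p for z in outcomes)


mean_z = E(lambda z: z)              # E[Z] = 0
mean_w = E(lambda z: z.conjugate())  # E[W] = 0
# K_ZW = E[Z conj(W)] - E[Z] E[conj(W)], and Z conj(W) = Z^2 here.
K_zw = E(lambda z: z * z) - mean_z * mean_w.conjugate()
# J_ZW = E[Z W] - E[Z] E[W], and Z W = |Z|^2 here.
J_zw = E(lambda z: z * z.conjugate()) - mean_z * mean_w

print(K_zw, J_zw)  # K_zw is 0 while J_zw is 2
```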

Circular symmetry of complex random variables is a common assumption in the field of wireless communication. A typical example of a circularly symmetric complex random variable is the complex Gaussian random variable with zero mean and zero pseudo-variance.

A complex random variable $Z$ is circularly symmetric if, for any deterministic $\phi\in[-\pi,\pi]$, the distribution of $e^{\mathrm{i}\phi}Z$ equals the distribution of $Z$.

Properties

By definition, a circularly symmetric complex random variable has

$$\operatorname{E}[Z] = \operatorname{E}[e^{\mathrm{i}\phi}Z] = e^{\mathrm{i}\phi}\operatorname{E}[Z]$$

for any $\phi$. Thus the expectation of a circularly symmetric complex random variable can only be either zero or undefined.

Additionally,

$$\operatorname{E}[ZZ] = \operatorname{E}[e^{\mathrm{i}\phi}Z\,e^{\mathrm{i}\phi}Z] = e^{2\mathrm{i}\phi}\operatorname{E}[ZZ]$$

for any $\phi$, so $\operatorname{E}[Z^2]=0$. Since the mean is also zero, Eq.4 reduces to $\operatorname{J}_{ZZ}=\operatorname{E}[Z^2]$, and thus the pseudo-variance of a circularly symmetric complex random variable can only be zero.

If $Z$ and $e^{\mathrm{i}\phi}Z$ have the same distribution for every $\phi$, the phase of $Z$ must be uniformly distributed over $[-\pi,\pi]$ and independent of the amplitude of $Z$.[4]
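The following Monte Carlo sketch illustrates these properties. It assumes a hypothetical circularly symmetric $Z$ with independent $N(0,1/2)$ real and imaginary parts and, for contrast, a purely real Gaussian, which is not circularly symmetric. After rotating by $\phi=\pi/4$, the empirical mean and $\operatorname{E}[Z^2]$ of the circular variable stay near zero, whereas $\operatorname{E}[Z^2]$ of the real Gaussian is multiplied by $e^{2\mathrm{i}\phi}=\mathrm{i}$. The seed and sample size are arbitrary.

```python
import cmath
import random

rng = random.Random(3)  # fixed seed for reproducibility
n = 50_000
s = 0.5 ** 0.5  # std of each part, so that E[|Z|^2] = 1

# Circularly symmetric: independent N(0, 1/2) real and imaginary parts.
circ = [complex(rng.gauss(0, s), rng.gauss(0, s)) for _ in range(n)]
# Not circularly symmetric: a purely real standard Gaussian.
real_only = [complex(rng.gauss(0, 1), 0) for _ in range(n)]


def mean(xs):
    return sum(xs) / len(xs)


def stats(xs):
    """Empirical (E[Z], E[Z^2]) of a list of samples."""
    return mean(xs), mean([x * x for x in xs])


phi = cmath.pi / 4
rot = cmath.exp(1j * phi)
results = {}
for name, xs in (("circular", circ), ("real-only", real_only)):
    results[name] = (stats(xs), stats([rot * x for x in xs]))
    print(name, results[name])  # (E[Z], E[Z^2]) before and after rotation
```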

Proper complex random variables

The concept of proper random variables is unique to complex random variables and has no counterpart for real random variables.

A complex random variable $Z$ is called proper if the following three conditions are all satisfied: $\operatorname{E}[Z]=0$, $\operatorname{Var}[Z]<\infty$ and $\operatorname{E}[Z^2]=0$.

This definition is equivalent to the following conditions: a complex random variable is proper if, and only if, $\operatorname{E}[Z]=0$, $\operatorname{E}[\Re(Z)^2]=\operatorname{E}[\Im(Z)^2]<\infty$ and $\operatorname{E}[\Re(Z)\Im(Z)]=0$.

Theorem — Every circularly symmetric complex random variable with finite variance is proper.

For a proper complex random variable, the covariance matrix of the pair $(\Re(Z),\Im(Z))$ has the following simple form:

$$\begin{bmatrix}\tfrac{1}{2}\operatorname{Var}[Z] & 0\\ 0 & \tfrac{1}{2}\operatorname{Var}[Z]\end{bmatrix}$$

I.e.:

$$\begin{aligned}&\operatorname{Var}[\Re(Z)] = \operatorname{Var}[\Im(Z)] = \tfrac{1}{2}\operatorname{Var}[Z]\\ &\operatorname{Cov}[\Re(Z),\Im(Z)] = 0\end{aligned}$$
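As a sketch, a hypothetical $Z$ that is uniform on the eight points $e^{\mathrm{i}k\pi/4}$, $k=0,\dots,7$, is proper, even though it is only invariant under rotations by multiples of $\pi/4$ and hence not circularly symmetric. Enumerating the outcomes confirms the diagonal covariance matrix with entries $\tfrac12\operatorname{Var}[Z]=\tfrac12$.

```python
import cmath

# Hypothetical example: Z uniform on the 8-PSK points e^{i k pi/4}, k = 0..7.
points = [cmath.exp(1j * k * cmath.pi / 4) for k in range(8)]
p = 1 / len(points)


def E(g):
    """E[g(Z)] with all eight points equally likely."""
    return sum(g(z) * p for z in points)


mean_z = E(lambda z: z)       # ~0
mean_z2 = E(lambda z: z * z)  # ~0, so Z is proper
var_z = E(lambda z: abs(z - mean_z) ** 2)
var_re = E(lambda z: (z.real - mean_z.real) ** 2)
var_im = E(lambda z: (z.imag - mean_z.imag) ** 2)
cov_re_im = E(lambda z: (z.real - mean_z.real) * (z.imag - mean_z.imag))

print(abs(mean_z) < 1e-12, abs(mean_z2) < 1e-12)  # True True
print([[var_re, cov_re_im], [cov_re_im, var_im]], var_z / 2)  # approx [[0.5, 0], [0, 0.5]], 0.5
```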

Cauchy-Schwarz inequality

The Cauchy-Schwarz inequality for complex random variables, which can be derived using the triangle inequality and Hölder's inequality, is

$$\left|\operatorname{E}\left[Z\overline{W}\right]\right|^2 \le \left|\operatorname{E}\left[\left|Z\overline{W}\right|\right]\right|^2 \le \operatorname{E}\left[|Z|^2\right]\operatorname{E}\left[|W|^2\right]$$
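Because an empirical distribution is itself a probability distribution, both inequalities hold exactly for sample averages. The sketch below checks them on a hypothetical dependent pair of simulated variables; any joint distribution would do.

```python
import random

rng = random.Random(4)  # fixed seed for reproducibility
n = 1000
# Hypothetical dependent pair built from Gaussian, exponential and uniform draws.
zs = [complex(rng.gauss(0, 1), rng.gauss(1, 2)) for _ in range(n)]
ws = [0.5j * z + complex(rng.expovariate(1.0), rng.uniform(-1, 1)) for z in zs]


def mean(xs):
    return sum(xs) / len(xs)


left = abs(mean([z * w.conjugate() for z, w in zip(zs, ws)])) ** 2     # |E[Z conj W]|^2
middle = mean([abs(z * w.conjugate()) for z, w in zip(zs, ws)]) ** 2  # (E|Z conj W|)^2
right = mean([abs(z) ** 2 for z in zs]) * mean([abs(w) ** 2 for w in ws])
print(left <= middle <= right)  # True
```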

Characteristic function

The characteristic function of a complex random variable is a function $\mathbb{C}\to\mathbb{C}$ defined by

$$\varphi_Z(\omega) = \operatorname{E}\left[e^{i\Re(\overline{\omega}Z)}\right] = \operatorname{E}\left[e^{i(\Re(\omega)\Re(Z)+\Im(\omega)\Im(Z))}\right].$$
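A minimal sketch evaluating both forms of the characteristic function for the three-point distribution in the table; they agree because $\Re(\overline{\omega}z)=\Re(\omega)\Re(z)+\Im(\omega)\Im(z)$. The value at $\omega=0$ is always 1. The test point $\omega$ and the function names are arbitrary, illustrative choices.

```python
import cmath

# Three-point distribution from the table: value z -> probability P(z).
pmf = {1 + 1j: 0.25, 1 - 1j: 0.25, 2 + 0j: 0.5}


def char_fn(pmf, omega):
    """phi_Z(omega) = E[exp(i Re(conj(omega) Z))]."""
    return sum(p * cmath.exp(1j * (omega.conjugate() * z).real) for z, p in pmf.items())


def char_fn_parts(pmf, omega):
    """The same quantity, written as E[exp(i (Re(omega) Re(Z) + Im(omega) Im(Z)))]."""
    return sum(
        p * cmath.exp(1j * (omega.real * z.real + omega.imag * z.imag))
        for z, p in pmf.items()
    )


omega = complex(0.7, -1.3)
print(char_fn(pmf, 0j))  # phi_Z(0) = 1
print(abs(char_fn(pmf, omega) - char_fn_parts(pmf, omega)) < 1e-12)  # True
```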

[1] Eriksson, Jan; Ollila, Esa; Koivunen, Visa (2009). Statistics for complex random variables revisited. 2009 IEEE International Conference on Acoustics, Speech and Signal Processing. Taipei, Taiwan: Institute of Electrical and Electronics Engineers. pp. 3565–3568. doi:10.1109/ICASSP.2009.4960396.

[2] Lapidoth, A. (2009). A Foundation in Digital Communication. Cambridge University Press. ISBN 9780521193955.

[3] Park, Kun Il (2018). Fundamentals of Probability and Stochastic Processes with Applications to Communications. Springer. ISBN 978-3-319-68074-3.

[4] Schreier, Peter J.; Scharf, Louis L. (2011). Statistical Signal Processing of Complex-Valued Data. Cambridge University Press. ISBN 9780511815911.
